Related To: [Metaphor Is Narrative]

Content Summary: 1600 words, 16 min read.

Ambassadors Of Good Taste

I concluded my discussion of metaphor with three takeaways:

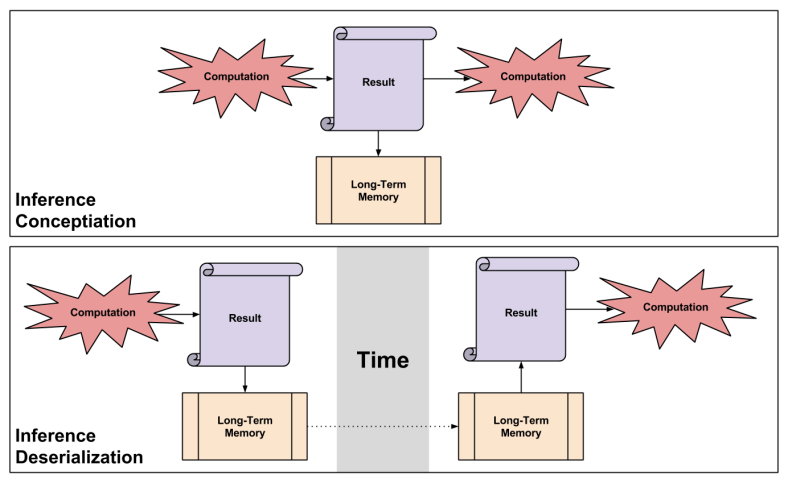

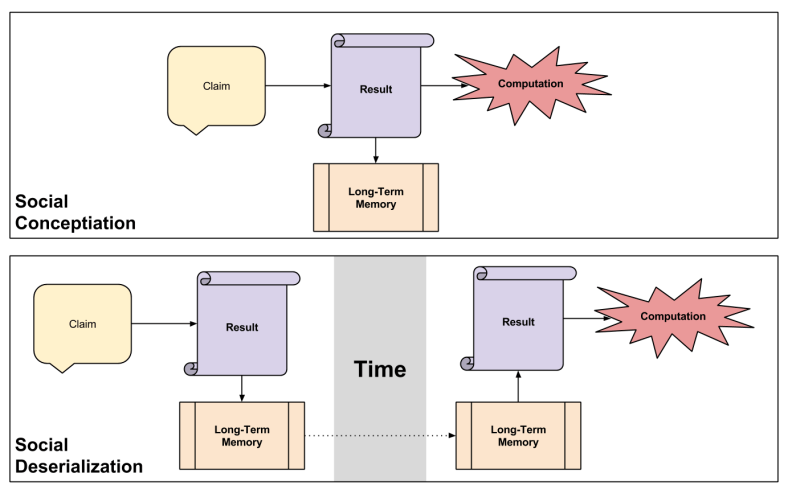

- Metaphor relocates inference: we reason about abstract concepts using sensorimotor processes.

- Metaphor imbues communication with affective flair or style.

- Weaving metaphors together is narrative paint.

Let me build on these theses with the following aphorisms:

- Metaphors which generate accurate empirical predictions are apt. Not all metaphors have this quality.

- Metaphorical aptitude is a continuous scale, with complex empirical predictions generating higher scores.

- Improving metaphorical aptitude is a design process.

- Scientists who immerse their empirical results into this process are, in my language, ambassadors of good taste.

This post strives to develop a metaphor with high aptitude. You are witness to what I mean by “design process”.

Anatomy Of A Metaphor

Concept-space is useful because it sheds light on the nature of learning. The central identifications are:

- A World Model Is A Location.

- The Reasoner Is A Vehicle

- Inference Is Travel

Our unconscious selves already use this metaphor frequently (cf. phrases like “I’m way ahead of you”). We aren’t inventing something so much as refining it.

To these three pillars, another identification can be successfully bolted on:

- Predictive Accuracy Is Height

As we will see, pursuing knowledge really is like climbing a mountain.

Need For Cognition is Frequency Of Travel

Let’s talk about need for cognition: that personality trait that disposes some people towards critical thinking.

Those who know me, know how deeply I am driven to interrogate reality. Why am I like this? My answer:

I pursue deep questions because I tell myself I am curious → I tell myself I am curious because I pursue deep questions.

Such identity bootstrapping appears in other contexts as well. For example:

I am generous with my time because I tell myself I am selfless → I tell myself I am selfless because I am generous with my time.

Curiosity is an itch, active curiosity is scratching it. In terms of our metaphor:

- If inference is travel, actively curious people are those who travel more frequently.

Intelligence is Vehicular Speed

Where does intelligence – that mental ability linked to abstraction – fit? Consider the following:

- Although our society tends to lionize IQ as a personal trait, intelligence is mostly (50-80%) genetic. High-IQ parents tend to have high-IQ children, and vice versa.

- What’s more, intelligence is highly predictive of success in life. It is so important for intellectual pursuits that eminent scientists in some fields have average IQs around 150 to 160. Since IQ this high only appears in 1/10,000 people or so, it beggars coincidence to believe this represents anything but a very strong filter for IQ.

- In other words, Nature is not going to win any awards for egalitarianism any time soon.

We interpret intelligence as follows:

- If the reasoner is a vehicle, intelligence is the speed of her vehicle.

If this topic conjures up existential angst (“I’ll never study again!” :P) check out this post. Speaking from my own life, my need for cognition is comparatively stronger than my intelligence quotient. In the tortoise-vs-hare race, I am the tortoise.

On Education And Directional Calibration

One might reasonably complain that learning is not a solitary activity – our metaphor is too individualistic.

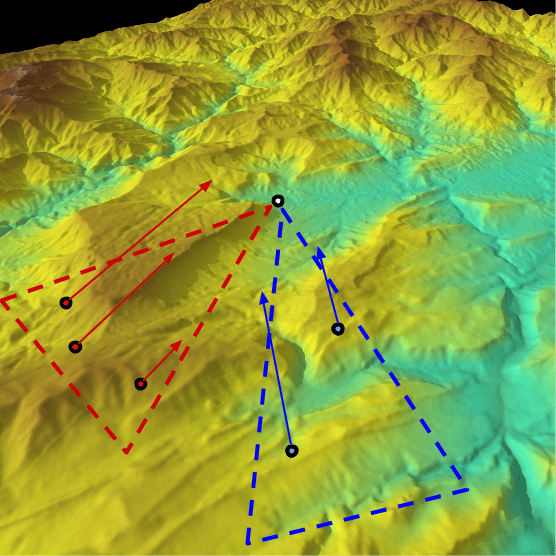

Let’s fix it. Consider the classroom. A teacher typically knows more than her students; in our metaphorical space, she is elevated above them. But the incomprehensible size of concept-space entails three uncomfortable facts:

- Every student resides in a different location.

- Knowing the precise location is computationally infeasible (even one’s own location).

- Without such knowledge, discovering that student’s optimal path up the mountain is also infeasible.

Fortunately, location approximations are possible. Imagine a calculus professor with five students. Three students are stuck on the mathematics of the chain rule; the other two don’t grok infinitesimals. We might imagine the first group to the SW of the professor and the second to the S-SE.

Without knowing anyone’s precise location, the professor (white dot) can provide the red group with worked examples of the chain rule (directing them to the NE) and the blue group with stories to motivate the need for infinitesimals (directing them to the N-NW). While such directional calibration is imprecise, it nevertheless gets them closer to the professor’s knowledge (amplifying their predictive power).

Notice how each student travels at a different speed (intelligence) and frequency (work ethic).
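Directional calibration can be sketched as a toy simulation. Everything here is an illustrative assumption rather than part of the metaphor itself: the two-dimensional coordinates, the speed and trip values, and the names `travel` and `calibrate` are all hypothetical. The professor never sees a student's coordinates; she only assigns one compass heading per group.

```python
import math

# Hypothetical 2-D slice of concept-space with the professor at the origin.
PROFESSOR = (0.0, 0.0)

# name: (x, y, speed, trips). All values are made up for illustration.
# "red" students sit roughly SW of the professor, "blue" roughly S-SE.
STUDENTS = {
    "red_1": (-3.0, -3.0, 1.0, 2),
    "red_2": (-2.0, -3.5, 0.5, 3),
    "red_3": (-4.0, -2.0, 1.5, 1),
    "blue_1": (1.0, -3.0, 0.5, 2),
    "blue_2": (1.5, -4.0, 1.0, 1),
}

# One compass bearing per group (0 = north, 90 = east): NE and N-NW.
HEADINGS = {"red": 45.0, "blue": 337.5}


def distance(a, b):
    """Straight-line distance between two points."""
    return math.hypot(a[0] - b[0], a[1] - b[1])


def travel(x, y, bearing_deg, speed, trips):
    """Move along a compass bearing; distance covered is speed * trips."""
    theta = math.radians(bearing_deg)
    length = speed * trips
    return (x + length * math.sin(theta), y + length * math.cos(theta))


def calibrate(students, headings, target):
    """Return {name: (distance_before, distance_after)} relative to target."""
    results = {}
    for name, (x, y, speed, trips) in students.items():
        group = name.split("_")[0]
        before = distance((x, y), target)
        after = distance(travel(x, y, headings[group], speed, trips), target)
        results[name] = (before, after)
    return results


results = calibrate(STUDENTS, HEADINGS, PROFESSOR)
for name, (before, after) in results.items():
    print(f"{name}: {before:.2f} -> {after:.2f}")
```

With these particular numbers every student ends up closer to the professor, even though no heading points exactly at her. Each student covers a different distance because speed and number of trips vary.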


On Inferential Distance

If the process of building World Models is a journey, the notion of inferential distance becomes relevant.

Imagine reading two essays and then being quizzed for comprehension. Both have the same word count; one is written by a theoretical physicist, the other by a journalist. The physicist’s writings would probably take longer to understand. But why is this so?

Surely there is a greater inferential distance between us and the theoretical physicist. Is it so surprising that traveling greater distances consumes more time?

This intuition sheds light on a common communication barrier, which Steven Pinker frames well:

Why is so much writing so bad?

The most popular explanation is that opaque prose is a deliberate choice. Bureaucrats insist on gibberish to cover their anatomy. Plaid-clad tech writers get their revenge on the jocks who kicked sand in their faces and the girls who turned them down for dates. Pseudo-intellectuals spout obscure verbiage to hide the fact that they have nothing to say, hoping to bamboozle their audiences with highfalutin gobbledygook.

But the bamboozlement theory makes it too easy to demonize other people while letting ourselves off the hook. In explaining any human shortcoming, the first tool I reach for is Hanlon’s Razor: Never attribute to malice that which is adequately explained by stupidity. The kind of stupidity I have in mind has nothing to do with ignorance or low IQ; in fact, it’s often the brightest and best informed who suffer the most from it.

The curse of knowledge is the single best explanation of why good people write bad prose. It simply doesn’t occur to the writer that her readers don’t know what she knows—that they haven’t mastered the argot of her guild, can’t divine the missing steps that seem too obvious to mention, have no way to visualize a scene that to her is as clear as day.

The curse of knowledge expects short inferential distances. Why does this bias (not another) live in our brains?

As we have seen, estimating location is expensive. So the brain takes a shortcut: it uses a location it already knows about (its own) and employs differences between the Self and the Other to estimate distance. Call this self-anchoring. But the brain isn’t aware of all differences, only those it observes. Hence the process of “pushing out” one’s estimation of Other Locations typically doesn’t go far enough… the birthplace of the curse.
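A minimal sketch of self-anchoring, under loudly stated assumptions: concept-space is treated as a small Euclidean space, and the estimator only "pushes out" along the dimensions where it has observed a difference. The coordinates and the function names `true_distance` and `self_anchored_estimate` are hypothetical.

```python
import math


def true_distance(a, b):
    """Euclidean distance using every dimension of concept-space."""
    return math.sqrt(sum((q - p) ** 2 for p, q in zip(a, b)))


def self_anchored_estimate(self_loc, other_loc, observed):
    """Start from one's own location and count only the observed differences.

    Unobserved dimensions are silently assumed to match the Self.
    """
    return math.sqrt(sum((other_loc[i] - self_loc[i]) ** 2 for i in observed))


# Hypothetical locations: the writer differs from the reader on three
# dimensions but has only noticed the first two.
writer = (0.0, 0.0, 0.0)
reader = (3.0, 4.0, 12.0)

estimate = self_anchored_estimate(writer, reader, observed=[0, 1])
actual = true_distance(writer, reader)
print(estimate, actual)  # 5.0 13.0
```

Because each unobserved dimension contributes zero to the estimate, the estimate can never exceed the true distance in this toy model. That one-sided error is the curse of knowledge.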

On Epistemic Frontiers, Fences, and Cliffs

It is tempting to view cognition as transcendent. Cognition transcendence plays a key role in debates over free will, for example. But I will argue that barriers to inference are possible. Not only that, but they come in three flavors.

Intelligence is speed, but is there a speed limit? There exist physical reasons to answer “yes”; instantaneous learning is as absurd as physical teleportation. Just as a light cone constrains how physical events spread through the universe, we might appeal to a cognition cone. Our first barrier to inference, then, is running out of gasoline. Death represents an epistemic frontier, with intellectually gifted people enjoying wider frontiers. Arguably, the frontier of anterograde amnesiacs is much shorter, defined by the frequency at which their memories “reset”.

If most education eases inference, we might imagine other social devices that retard that very same movement. Examples abound of such malicious, man-made epistemic fences. While conspiracy theories typically rely on naive models of incentive structures, other forms of information concealment plague the world. Finally, people steeped in cognitive biases (e.g., cult members within a happy death spiral) cannot navigate concept-space normally.

Epistemic frontiers need not concern us overly much (e.g., educational inefficiencies inhibit progress more than short lifespans). Epistemic fences are more malicious, but we can still dream of moving away from tribalism. What about permanent barriers? Might naturally-occurring epistemic cliffs inhabit our intellectual landscape? Yes. Some of the more well-known cliffs include Gödel’s Incompleteness Theorems and the Heisenberg Uncertainty Principle.

We have seen three types of inferential stumbling blocks: finite frontiers, man-made fences, and natural cliffs. But consider what it means to reject cognition transcendence. Two theses from Normative Therapy were:

- Motivation: normative structures should point towards their ends in motivationally-optimal ways.

- Despair: It is not motivationally-optimal to be held to a normative structure beyond one’s capacities.

If these principles seem agreeable, it may be time to reject arguments of the form “all people should believe X”.

Takeaways

In this post, we developed a metaphor of epistemic topography, or concept-space:

- A World Model Is A Location.

- The Reasoner Is A Vehicle

- Predictive Accuracy Is Height

- Intelligence Is Vehicular Speed

- Inference Is Travel

- Need For Cognition Is Frequency Of Travel

We then used this six-part metaphor to shed light on the following applications:

- Education is the art of directing people whose locations you do not know towards higher peaks.

- The Curse Of Knowledge can be explained as incomplete extrapolation from one’s own conceptual location.

- The inferential journey can be blocked by three kinds of barriers: finite frontiers, man-made fences, and natural cliffs.

- These facts render arguments of the form “all people should believe X” dubious.