Part Of: Neuroeconomics sequence

Content Summary: 1100 words, 11 min read

Historical Context

William James (1842-1910), the “father of American psychology”, was also a world-renowned philosopher who, together with CS Peirce and John Dewey, founded the philosophical school of pragmatism. This illustrates that, in its early days, psychology (along with many other sciences) was more closely interwoven with philosophy.

This connection persisted into the 1930s, when two related movements came to dominate the intellectual zeitgeist. In analytic philosophy, logical positivism from the Vienna Circle quickly gained traction. Logical positivism partitioned language into two components: synthetic statements (“this leaf is green”) and analytic statements (“all bachelors are unmarried”). Further, it relied on the verification principle: a concept is meaningful only by virtue of the operations used to measure it.

Concurrently, BF Skinner became the leading figure of behaviorism, a research tradition (launched earlier by John Watson) that focused on relationships between Stimulus and Response (SR associations). Influenced by positivism, Skinner also promoted radical behaviorism, which insisted that talk about subjective experience, and all mental phenomena, had no meaning.

In 1951, Quine penned his Two Dogmas of Empiricism, which sounded the death knell for logical positivism. And in 1959, Noam Chomsky wrote his Review Of Skinner’s Verbal Behavior, a devastating takedown of radical behaviorism. Chomsky helped usher in the cognitive revolution, with its key metaphor of brain as computer. Importantly, just as we can inspect the inner workings of a computer, we can also hope to learn about events that transpire between our ears.

I disagree with the philosophy of radical behaviorism. But the empirical results of behaviorism persist, and demand explanation. So today, let’s explore what this research programme learned some sixty years ago.

Classical Conditioning

The most important result from behaviorist experiments is conditioning, a robust form of associative learning. It comes in two flavors:

- Classical conditioning is about learning in behavior-irrelevant situations (where e.g., a rat’s behavior doesn’t affect foot shock).

- Instrumental conditioning is about learning in behavior-relevant situations (where e.g., a rat’s behavior affects foot shock).

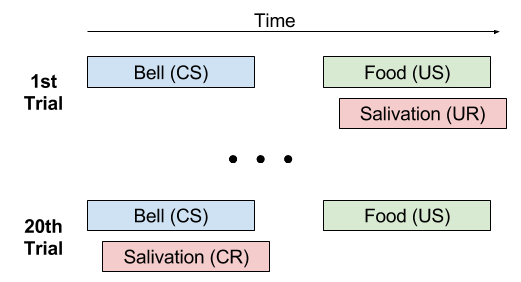

To illustrate the former, let’s turn to a famous experiment by Pavlov. Some behaviors seem innate: you don’t need to teach a rat to dislike pain, and you don’t need to teach a dog to salivate when presented with food. In such an innate association, call the trigger (food) the unconditioned stimulus US and the reaction (salivation) the unconditioned response UR. In contrast, neutral stimuli fail to elicit meaningful behavior.

Pavlov’s insight: if you consistently ring a bell before providing food, the food-salivation association will change! The salivation reflex will travel forward in time, towards the conditioned stimulus CS (in this case, the bell).

Note the CS bell serves as a predictor of reward. Since salivation readies the mouth for digestion, it makes sense for the brain to initiate this preparation as soon as it learns of an imminent meal.
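To make “the bell becomes a predictor” concrete, here is a minimal sketch of repeated bell-then-food trials. It assumes the Rescorla-Wagner update rule, a standard textbook model that this post does not itself derive; the function name, learning rate, and trial count are arbitrary choices for illustration.

```python
def simulate_pairings(n_trials, learning_rate=0.2, reward=1.0):
    """Return the bell's associative strength after each bell-then-food trial.

    Assumed model (Rescorla-Wagner): strength moves toward the reward
    by a fixed fraction of the prediction error on every trial.
    """
    strength = 0.0  # the bell starts out as a neutral stimulus
    history = []
    for _ in range(n_trials):
        prediction_error = reward - strength  # how surprising the food still is
        strength += learning_rate * prediction_error
        history.append(strength)
    return history


history = simulate_pairings(20)
for trial in (1, 5, 10, 20):
    print(f"trial {trial:2d}: strength = {history[trial - 1]:.3f}")
```

Under these assumptions the bell’s strength rises quickly at first and then levels off as the food becomes fully predicted, which mirrors the salivation response migrating to the bell.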

On Carrots And Sticks

Have you ever heard the expression “should I use a carrot or stick”? This idiom derives from a cart driver dangling a carrot in front of a mule and holding a stick behind it. The claim is that there are basically two routes to alter behavior: positive and negative feedback.

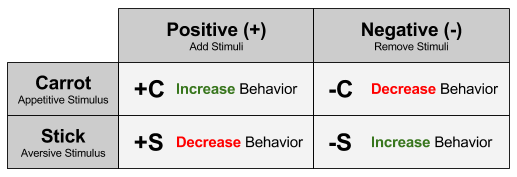

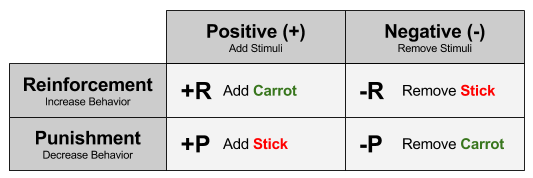

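But this idiom is incomplete. We must also consider the effect of removing carrots & sticks. So there are four ways to alter behavior:

- Add a carrot (present something pleasant).

- Add a stick (present something unpleasant).

- Remove a carrot (take away something pleasant).

- Remove a stick (take away something unpleasant).

Let us reorganize this taxonomy, by grouping actions that increase or decrease behavior, respectively:

- Reinforcement increases behavior: adding a carrot (positive reinforcement) or removing a stick (negative reinforcement).

- Punishment decreases behavior: adding a stick (positive punishment) or removing a carrot (negative punishment).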

Instrumental Conditioning

Of course, proverbs can be wrong! For example, the “sugar high” myth remains alive and well, at least in my social circles. Do “carrots and sticks” really alter behavior?

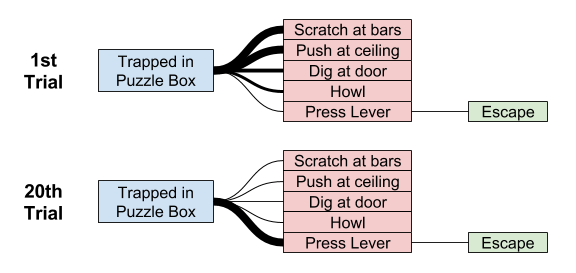

Back in 1898, Thorndike conducted his Puzzle Box experiment, which trapped a cat in a small space, with a hidden lever that facilitated escape. On the first trial, the cat relied heavily on its innate escape behaviors: scratching at the bars, pushing at the ceiling, etc. However, the only behavior that facilitated escape was pressing the lever. After several repetitions of this experiment, the cat learned not to waste time scratching etc: it pressed the lever immediately.

This is evidence of negative reinforcement: after the cat pressed the lever, an unpleasant state (confinement) was removed, and the frequency of lever-pressing subsequently increased.

By now, strong evidence supports instrumental learning from all four kinds of reinforcements and punishments. The Law of Effect expresses this succinctly:

Responses that produce a satisfying effect in a particular situation become more likely to occur again in that situation, and responses that produce a discomforting effect become less likely to occur again in that situation.

This effect occurs in a remarkably wide range of animal species! This suggests that the brain mechanisms underlying this ability are highly conserved across species. But I will leave my remarks on the biological substrate of conditioning for another post. 🙂
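The Law of Effect can be illustrated with a toy version of the puzzle box. This is only a sketch under stated assumptions: the three behaviors, their equal starting weights, and the fixed `boost` added after each escape are all hypothetical, and the code is not a model of Thorndike’s actual data.

```python
import random


def train_cat(n_trials, boost=1.0, seed=0):
    """Simulate puzzle-box trials; return final weights and attempts per trial."""
    rng = random.Random(seed)
    # Hypothetical starting point: every behavior is equally likely.
    weights = {"scratch bars": 1.0, "push ceiling": 1.0, "press lever": 1.0}
    attempts_per_trial = []
    for _ in range(n_trials):
        attempts = 0
        while True:
            attempts += 1
            action = rng.choices(list(weights), weights=list(weights.values()))[0]
            if action == "press lever":
                # Escape follows the lever press, so that response is strengthened.
                weights[action] += boost
                break
        attempts_per_trial.append(attempts)
    return weights, attempts_per_trial


weights, attempts = train_cat(30)
lever_probability = weights["press lever"] / sum(weights.values())
print(f"probability of pressing the lever first: {lever_probability:.2f}")
```

Only the satisfying half of the law is modeled here: the response followed by escape becomes more likely, so later trials tend to need fewer attempts. A fuller sketch would also weaken responses followed by discomfort.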

Shaping

Reinforcement and punishment are powerful learning tools. One successful research programme of behaviorism is behavioral control: engineering the right sequence of positive & negative outcomes to dictate an organism’s behavior.

Shaping is an important vehicle for behavioral control. If you desire an animal to exhibit some behavior (even one that would never occur naturally), you simply apply differential reinforcement of successive approximations. Take, for example, rat basketball:

Animal training relies heavily on shaping techniques. In the words of BF Skinner,

By reinforcing a series of successive approximations, we bring a rare response to a very high probability in a short time. … The total act of turning toward the spot from any point in the box, walking toward it, raising the head, and striking the spot may seem to be a functionally coherent unit of behavior; but it is constructed by a continual process of differential reinforcement from undifferentiated behavior, just as the sculptor shapes his figure from a lump of clay.
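Differential reinforcement of successive approximations can be sketched as a simple loop. The version below is a toy, not a model of any real training protocol: it assumes behavior can be summarized as a single number, that responses vary randomly around the animal’s typical behavior, and that each reinforced response pulls typical behavior toward itself. The names `shape`, `spread`, and `step` are hypothetical.

```python
import random


def shape(target, start=0.0, n_trials=200, spread=1.0, step=0.5, seed=0):
    """Toy shaping loop: reward only responses closer to `target` than usual."""
    rng = random.Random(seed)
    typical = start  # the animal's current typical response
    distances = [abs(typical - target)]
    for _ in range(n_trials):
        # Behavior varies from moment to moment around the typical response.
        response = rng.gauss(typical, spread)
        # Differential reinforcement: only better-than-typical attempts are rewarded.
        if abs(response - target) < abs(typical - target):
            # Reinforced responses become more typical.
            typical += step * (response - typical)
        distances.append(abs(typical - target))
    return typical, distances


final, distances = shape(target=10.0)
print(f"distance to target: {distances[0]:.2f} at start, {distances[-1]:.2f} at end")
```

Because the criterion for reward is always “closer than what the animal usually does”, the bar rises as behavior improves, which is the sense in which the approximations are successive.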

The topic of behavioral control is often met with discomfort: don’t such findings empower manipulative people? I want to add two comments here:

- You don’t see primates shaping each other’s behaviors, despite its obvious adaptive value. Why? I suspect primates like ourselves possess emotional software that detects and punishes social manipulation. Specifically, I suspect our intuitions about personal autonomy and moral inflexibility evolved for precisely this purpose.

- Shaping, and associative learning, are not unlimited in scope. You can shape a rat to play basketball, but shaping will completely fail to produce e.g., self-starvation. The brain is not a tabula rasa, and it cannot be stretched beyond its biological constraints.

Takeaways

- Philosophically, radical behaviorism collapsed in the 1970s. But it left behind important empirical results.

- A wide swathe of animals exhibit classical conditioning, which is learning to associate innate responses with (previously meaningless) predictors.

- Extensive evidence also suggests the brain can perform instrumental conditioning, a more behaviorally-relevant form of learning.

- Specifically, the Law of Effect states that satisfying outcomes make the preceding behavior more likely, and discomforting outcomes make it less likely.

- The instrumental conditioning technique of shaping is still used today by animal trainers to install utterly novel behaviors in animals, such as rats playing basketball. 🙂

For another look at conditioning, I recommend this video.

Until next time.