What’s So Important About Graphs?

Of all the conceptual devices in my toolkit, hyperpoints and graphs are employed the most frequently. I have explained hyperpoints previously; let me today explain What’s So Important About Graphs?

Human beings tend to divide the world into states versus actions. This metaphor is so deeply ingrained in our psyche that it tends to be taken for granted. Graphs are important because they visualize this state-action dichotomy: states are dots, actions are lines.

Put crudely, graphs are little more than connect-the-dots drawings. Now, dear reader, perhaps you do not yet regularly contemplate connect-the-dots in your daily life. Let me paint some examples to whet your appetite.

- Maps are graphs. Locations are nodes, paths are the edges that connect them.

- Academic citations are a graph. Papers are nodes, citations are the edges that connect them.

- Facebook is a graph. People are nodes, friendships are the edges that connect them.

- Concept-space is a graph. Propositions are nodes, inference are the edges that connect them.

- Causality is a graph. Effects are nodes, causes are the edges that connect them.

- Brains are graphs. Neurons are nodes, axons are the edges that connect them.

The above examples are rather diverse, yet we can reason about them within a single framework. This is what it means to consolidate knowledge.

Graph Theory: Applications & Limitations

It turns out that a considerable amount of literature lurks beneath each of our examples. Let’s review the highlights.

Once upon a time, Karl Popper argued that a scientific theory is only as strong as it is vulnerable to falsification. General relativity made the crazy prediction of gravitational lensing which was only later confirmed experimentally. One reason to call astrology “pseudoscience” is in its reluctance to produce such a vulnerable prediction.

But simple falsification doesn’t fully describe science: healthy theories can survive certain kinds of refutations. How? W. V. O. Quine appealed to the fact that beliefs cannot be evaluated in isolation; we must instead view scientific theories as a “web of belief”. And this web can be interpreted graphically! Armed with this interpretation, one can successfully evaluate philosophical arguments involving Quine’s doctrine (called confirmation holism) based on technical constraints on graphical algorithms.

The modern incarnation of confirmation holism occurs when you replace beliefs with degrees-of-belief. These probabilistic graphical models are powerful enough to formally describe belief propagation.

But even probabilistic graphical models don’t fully describe cognition: humans possess several memory systems. Our procedural memory (“muscle memory”) is a graph of belief, and our episodic memory (“story memory”) is a separate graph of belief.

How to merge two different graphs? Graph theory cannot compose graphs horizontally.

Switching gears to two other examples:

- The formal name for brains-are-graphs is neural networks. They are the lifeblood of computational neuroscientists.

- The formal name for Facebook-is-a-graph is social networks. They are vital to the research of sociologists.

How might a neuroscientist talk to a sociologist? One’s network represents the mental life of a person; the other, the aggregate lives of many people. We want to say is that every node in a social graph contains an entire neural graph.

How to nest graphs-within-graphs? Graph theory cannot compose graphs vertically.

The Categorical Landscape

What are categories? For us, categories generalize graphs.

A directed graph can be expressed G = (V, E); that is, it contains a set of nodes, and a set of edges between those nodes.

A small category C = (O, M); that is, it contains a set of objects, and a set of morphisms between those objects.

Categories endow graphs with additional structure.

- Category Theory require the notion of self-loops: actions that result in no change.

- In Graph Theory, there is a notion of paths from one node to another. Category Theory promote every path into its very own morphism.

- Category Theory formalizes the notion of context: objects and morphisms live within a common environment; this context is called a “category”.

As an aside, directed graphs require only one edge between graphs, and no self-loops. We could tighten the analogy yet further by comparing categories to quivers (directed graphs that don’t forbid parallel edges and self-loops).

But enough technicalities. Time to meet to your first category! In honor of category theory’s mathematical background, allow me to introduce Set. In Set, objects are sets, and morphisms are functions. Here is one small corner of the category:

Self-loops 1A, 1B, and 1C change nothing; they are special kind of self-loops called identity morphisms. Since such things exist on every node, we will henceforth consider their existence implicit.

Self-loops 1A, 1B, and 1C change nothing; they are special kind of self-loops called identity morphisms. Since such things exist on every node, we will henceforth consider their existence implicit.

Recall our requirement that every path has a morphism. The above example contains three paths:

- π1 = A → B

- π2 = B → C

- π3 = A → B → C

The first two paths are claimed by f and g, respectively, but the third is unaffiliated. For this to qualify as a category, we must construct for it a new morphism. How? We create h : A → C via function composition:

h(x) = g(f(x)) = [f(x)]-1 = 2x-1.

We also require morphism composition to be associative. Another example should make this clear:

Besides g(f(x)), we can also write function composition as f●g (“f then g”). In the above, we have:

- i(x) = f●g

- j(x) = g●h

- k(x) = f●g●h

With this notation, we express our requirement for morphism associativity as

- k = (f●g)●h = f●(g●h).

- That is, k = i●h = f●j.

- That is, “you can move from A to D by travelling either along i-then-h, or along f-then-j”.

Harden The Definition

Let me be precise. Categories contain two types of data:

- A set of objects O.

- A set of morphisms M such that m : A → B

The set of morphisms is required to contain the following two sets:

- A set of identity morphisms 1 such that 1A : A → A

- A set of composition morphisms such that for every f : A → B and g: B → C, there is a morphism h: A → C.

The set of morphisms is required to respect the following two rules:

- Identity: composing a morphism with an identity does nothing. f●1 = f = 1●f

- Associativity: the order in which you compose functions doesn’t matter. (f●g)●h = f●(g●h)

Connecting To Group Theory

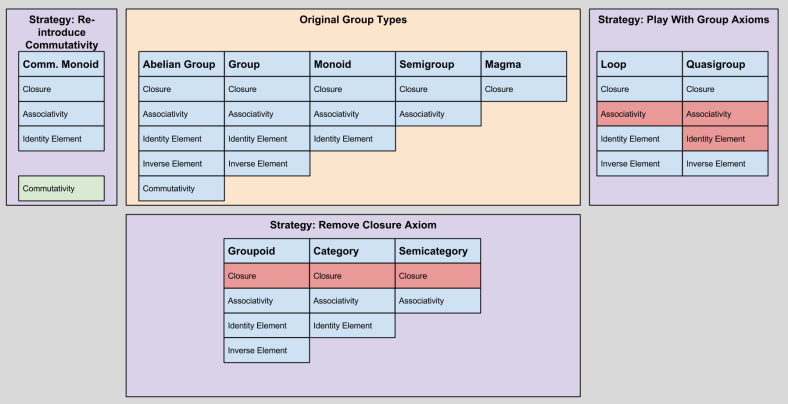

Recall that groups define axioms governing set operations. A group might choose to accept or reject any of the following axioms:

- Closure

- Associativity

- Identity Element

- Inverse Element

- Associativity

We can now better appreciate our taxonomy from An Introduction To Group Theory:

Take a second to locate the Category group. Do you see how its two axioms align with our previous definition of a category?

Notice that in group theory, categories are kind of a degenerate monoid. Why have we removed the requirement for closure?

We can answer this question by considering the category SomeMonoid? It turns out that this category has only one object (with each of its member elements as self-loops). This is the categorical consequence of closure. We do not require closure in our category theory because we want license to consider categories with a multitude of objects.

Takeaways

In this article, I introduced graphs, and used human memory and social vs. neural networks to motivate two limitations of graph theory:

- How to merge two different graphs? Graph theory cannot compose graphs horizontally.

- How to nest graphs-within-graphs? Graph theory cannot compose graphs vertically.

I then introduced you to Category Theory, showing how it both generalizes graph theory and connects to abstract algebra.

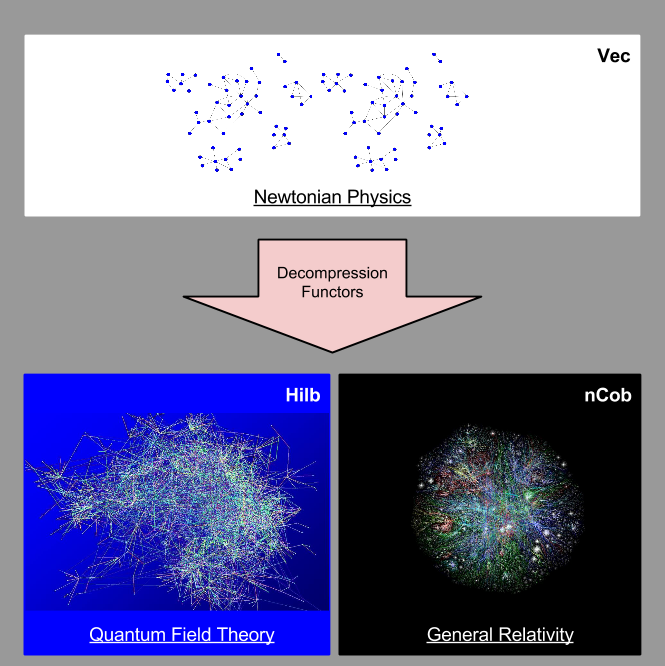

In the final half of this introduction, I will discharge my above two commitments. Specifically, next time I will show how:

- The concept of functor permits horizontal composition of categories.

- The distinction between “inside” and “outside” views facilitates vertical composition of categories.