Part Of: Algorithmic Game Theory sequence

Related To: An Introduction To Prisoner’s Dilemma

Content Summary: 1000 words, 10 min read

The Paradox

Newcomb’s Paradox is a thought experiment popularized by philosophy superstar Robert Nozick in 1969, with important implications for decision theory:

A superintelligence from another galaxy, whom we shall call Omega, comes to Earth and sets about playing a strange little game. In this game, Omega selects a human being, sets down two boxes in front of them, and flies away.

Box A is transparent and contains a thousand dollars.

Box B is opaque, and contains either a million dollars, or nothing.

You can take both boxes, or take only box B.

And the twist is that Omega has put a million dollars in box B iff Omega has predicted that you will take only box B.

Omega has been correct on each of 100 observed occasions so far – everyone who took both boxes has found box B empty and received only a thousand dollars; everyone who took only box B has found B containing a million dollars. (We assume that box A vanishes in a puff of smoke if you take only box B; no one else can take box A afterward.)

Before you make your choice, Omega has flown off and moved on to its next game. Box B is already empty or already full.

Omega drops two boxes on the ground in front of you and flies off.

Do you take both boxes, or only box B?

Well, what’s your answer? 🙂 Seriously, make up your mind before proceeding. Do you take one box, or two?

Robert Nozick once remarked that:

To almost everyone it is perfectly clear and obvious what should be done. The difficulty is that people seem to divide almost evenly on the problem, with large numbers thinking that the opposing half is just being silly.

The disagreement seems to stem from a conflict between two strong intuitions: an “altruistic” intuition in favor of 1-boxing, and a more “selfish” intuition in favor of 2-boxing. They are, respectively:

- Expectation Intuition: Take the action with the greater expected outcome.

- Maximization Intuition: Take the action which, given the current state of the world, guarantees you a better outcome than any other action.

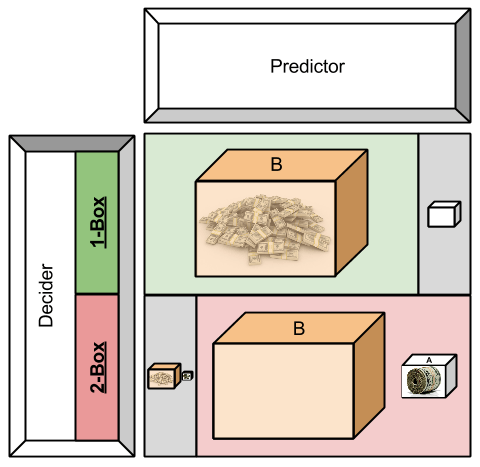

Allow me to visualize the mechanics of this thought experiment now (note that the Predictor is a not-necessarily-omniscient version of Omega):

Still with me? 🙂 Good. Let’s see if we can use game theory to mature our view of this paradox.

Game-Theoretic Lenses

Do you remember Introduction To Prisoner’s Dilemma? At one point in that article, we decomposed the problem into a decision matrix. We can do the same thing for this paradox, too:

From the perspective of the Decider, strategic dominance here cashes out our Maximization Intuition: the bottom row always outperforms the top row.
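The dominance claim can be checked mechanically. Below is a minimal sketch in Python, using the dollar amounts from the problem statement; the dictionary layout, the action and state labels, and the helper name `strictly_dominates` are illustrative assumptions, not part of the original thought experiment.

```python
# Payoffs to the Decider in dollars, taken from the problem statement.
# Keys are (decider_action, state_of_box_b); the labels are illustrative.
PAYOFFS = {
    ("one_box", "full"): 1_000_000,
    ("one_box", "empty"): 0,
    ("two_box", "full"): 1_001_000,
    ("two_box", "empty"): 1_000,
}
STATES = ("full", "empty")


def strictly_dominates(action, other):
    """Return True if `action` pays more than `other` in every state of box B."""
    return all(PAYOFFS[(action, s)] > PAYOFFS[(other, s)] for s in STATES)


print(strictly_dominates("two_box", "one_box"))  # True
print(strictly_dominates("one_box", "two_box"))  # False
```

Note that this check holds the state of box B fixed while comparing actions. It says nothing yet about how likely each cell is.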

However, the point of making the Predictor knowledgeable is that landing in the gray cells (top-right, bottom-left) becomes unlikely. Let us make the size of our boxes represent the chance that they will occur.

With a perfectly accurate Predictor, the size of the gray cells becomes zero, and now 1-Boxing suddenly outperforms 2-Boxing: the only outcomes left are a million dollars for the 1-boxer and a thousand dollars for the 2-boxer.

With a Predictor with bounded intelligence, what follows? Might some logic be constructed to describe optimal choices in such a scenario?
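One way to start on this question is to run the Expectation Intuition's arithmetic for an imperfect Predictor. The sketch below assumes the Predictor is correct with the same probability `p` whichever action you take; that symmetry, the parameter names, and both function names are assumptions made for illustration.

```python
def expected_payoffs(p, small=1_000, big=1_000_000):
    """Expected dollars for (1-boxing, 2-boxing) when the Predictor is right with probability p."""
    one_box = p * big                # box B is full only if 1-boxing was correctly predicted
    two_box = small + (1 - p) * big  # box B is full only if the Predictor got it wrong
    return one_box, two_box


def break_even_accuracy(small=1_000, big=1_000_000):
    """Accuracy at which both actions have the same expected payoff.

    Solves p * big = small + (1 - p) * big for p.
    """
    return (big + small) / (2 * big)


for p in (0.5, 0.9, 1.0):
    print(p, expected_payoffs(p))

print(break_even_accuracy())  # 0.5005
```

Under these assumptions, the Expectation Intuition favors 1-boxing as soon as the Predictor does slightly better than a coin flip. The Maximization Intuition is unmoved by this calculation, since it refuses to let the choice of action change the probability that box B is full.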

A Philosophical Aside

Taken from this excellent article:

The standard philosophical conversation runs thusly:

- One-boxer: “I take only box B, of course. I’d rather have a million than a thousand.”

- Two-boxer: “Omega has already left. Either box B is already full or already empty. If box B is already empty, then taking both boxes nets me $1000, taking only box B nets me $0. If box B is already full, then taking both boxes nets $1,001,000, taking only box B nets $1,000,000. In either case I do better by taking both boxes, and worse by leaving a thousand dollars on the table – so I will be rational, and take both boxes.”

- One-boxer: “If you’re so rational, why ain’cha rich?”

- Two-boxer: “It’s not my fault Omega chooses to reward only people with irrational dispositions, but it’s already too late for me to do anything about that.”

- One-boxer: “What if the reward within box B were something you could not leave behind? Would you still fall on your sword?”

Who wins, according to the experts? No consensus exists. Some relevant results, taken from a survey of professional philosophers:

Newcomb’s problem: one box or two boxes?

- Accept or lean toward: two boxes 292 / 931 (31.4%)

- Accept or lean toward: one box 198 / 931 (21.3%)

- Don’t know 441 / 931 (47.4%)

Prediction Is Time Travel

There is a wonderfully recursive nature to Newcomb’s problem: the accuracy of the Predictor stems from its modelling of your decision machinery.

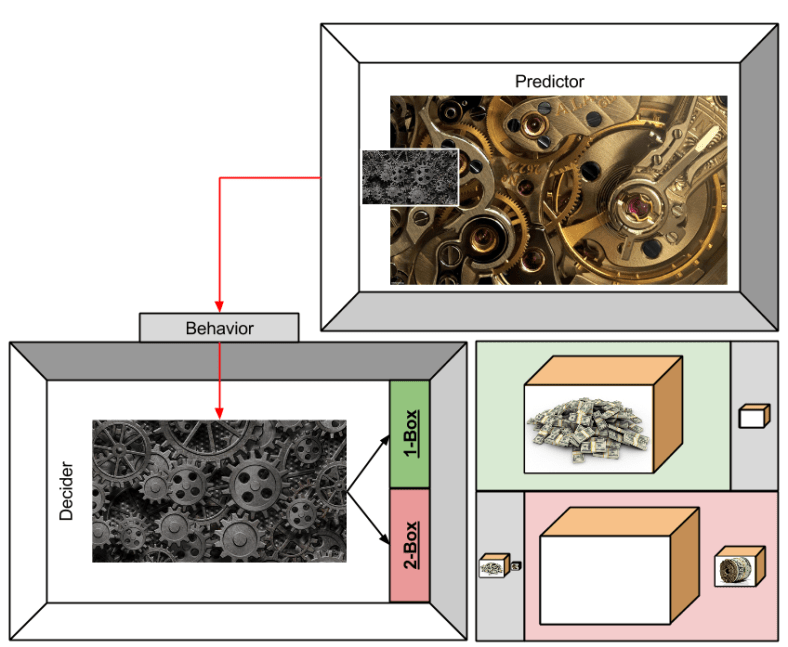

In this (more complete) diagram, we have the Predictor building a model of your cognitive faculties via the proxy of behavior.

As more information is pulled from the Decider (red arrow), the fidelity of the Predictor’s model (gray image in the Predictor) improves, and its predictions become more accurate (shrinking the gray outcome-rectangles).

The Decider may say to herself:

Oh, if only my mind were such that the “altruistic” Expectation Intuition could win at prediction-time, but the “selfish” Maximization Intuition could win at choice-time.

Then, I would deceive the Predictor. Then, I would win $1,001,000.

But even if she possesses the ability to control her mind in this way, a perfect Predictor will learn of it. Thus, the Decider may reason:

If my changing my mind is so predictable, perhaps I might find some vehicle to change my mind based on the result of a coin flip…

What the Decider is trying to do, here, is find a mechanism to corrupt the Predictor’s knowledge. How might a Predictor respond to such attacks on deterministic prediction?
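To see why randomization counts as an attack, consider a toy model of the coin-flip idea. The sketch assumes the Decider 1-boxes with probability `q` using a private coin, that the Predictor knows `q` but not the coin's outcome, and that the Predictor simply predicts the more likely action (predicting 1-boxing on a tie). All of these are illustrative assumptions, as are the function names; a real Predictor might adopt a very different policy.

```python
def predictor_accuracy(q):
    """Best accuracy available to a Predictor that knows q but not the coin's outcome."""
    return max(q, 1 - q)


def decider_expected_payoff(q, small=1_000, big=1_000_000):
    """Expected dollars for a Decider who 1-boxes with probability q.

    Assumes the Predictor fills box B exactly when 1-boxing is at least
    as likely as 2-boxing (q >= 0.5).
    """
    box_b = big if q >= 0.5 else 0
    # 1-boxing collects box B alone; 2-boxing collects box B plus box A.
    return q * box_b + (1 - q) * (box_b + small)


for q in (0.0, 0.4, 0.5, 0.9, 1.0):
    print(q, predictor_accuracy(q), decider_expected_payoff(q))
```

In this toy model a fair coin drags the Predictor's accuracy down to chance, and the Decider's expected payoff at `q = 0.5` slightly exceeds what a committed 1-boxer receives. That gap is exactly what a Predictor would want to close, which is the open question posed above.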

Open Questions

This article is more of an exploration than a tour of hardened results.

Maybe I’ll produce revision #2 at some point… anyways, to my present self, the following are open questions:

- How can we get clear on the relations between Newcomb’s Paradox and game theory more generally?

- How might the “probability mass” lens be used to generalize this paradox to imperfect Predictors?

- How might the metaphysics of retroactive causality, or at least the intuitions behind such constructs, play into the decision?

- How much does the anti-maximization principle behind 1-boxing (and the ability to precommit to such “altruistic irrationality”) explain about human feelings of guilt or gratitude?

- How might this subject be enriched by complexity theory and metamathematics?

- How might this subject enrich discussions of reciprocal altruism and hyperbolic discounting?

Until next time.