Part Of: [Deserialized Cognition] sequence

Followup To: [Deserialized Cognition]

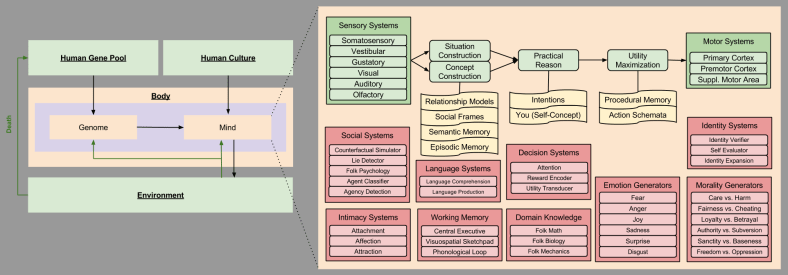

Preliminaries

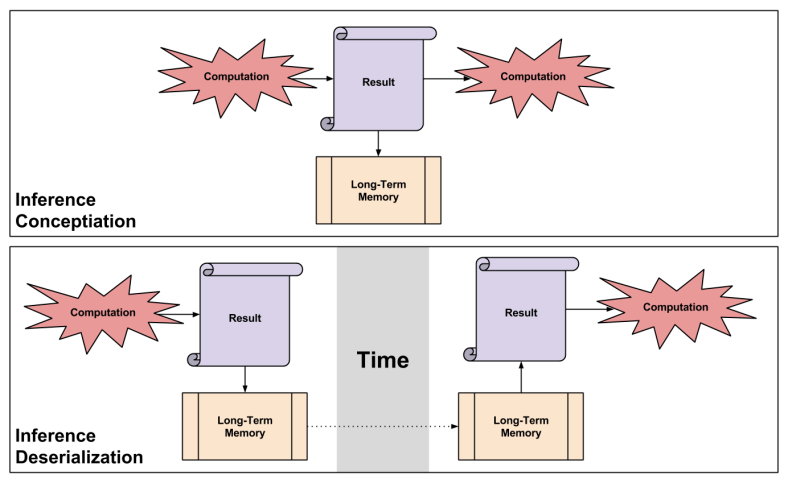

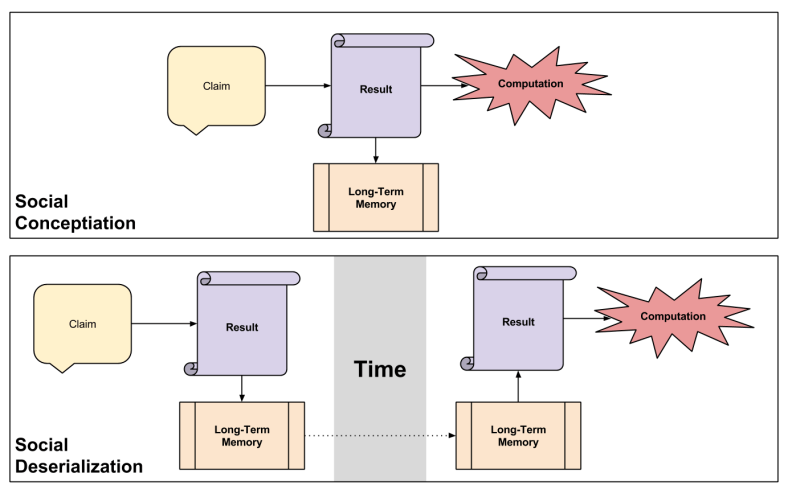

Two major differences exist between conceptiation and deserialization:

- Deserialization Delay: A time barrier exists between concept birth & use.

- Deserialization Reuse: The brain is able to “get more” out of its concepts.

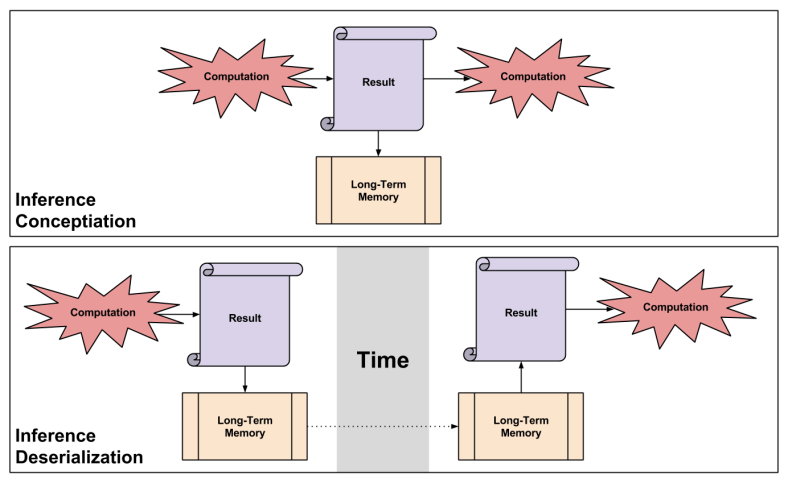

Inference Deserialization: Obsolescence Hazard

Let’s consider the deserialization delay within inference cognition modes:

If you think of an idea, and a couple of hours later deserialize & leverage it, the risk will (presumably) be minimal. But what about ideas conceived decades ago?

Your inference engines change over time. Here’s a fun example: Santa Claus. It is easy to imagine even a very bright child believing in Santa, given a sufficiently persuasive parent. The cognitive sophistication to reject Santa Claus only comes with time. However, even after this ability is acquired, this belief may be loaded from semantic memory for months before it is actively re-evaluated.

The problem is that every time your inference engines are upgraded (“versioned”), their past creations are not tagged as obsolete. What’s worse, you are often ignorant of upgrades to the engine itself: you typically fail to notice them (cf. Curse Of Knowledge).
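To make the versioning metaphor concrete, here is a minimal sketch of the tagging the brain does not perform. It is an analogy only, not a model of real memory: `StoredConcept`, `SemanticMemory`, and every method name are hypothetical, invented for illustration. Each concept is stamped with the engine version that produced it, so a later load can report that the concept predates the current engine.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class StoredConcept:
    """A serialized belief (hypothetical type, for illustration only)."""
    claim: str
    engine_version: int  # version of the inference engine that produced it


class SemanticMemory:
    """Toy store that tags each concept with its originating engine version."""

    def __init__(self) -> None:
        self.engine_version = 1
        self._store: dict[str, StoredConcept] = {}

    def conceive(self, key: str, claim: str) -> None:
        # Conceptiation: stamp the new concept with the current engine version.
        self._store[key] = StoredConcept(claim, self.engine_version)

    def upgrade_engine(self) -> None:
        # The engine changes; nothing already in the store is touched.
        self.engine_version += 1

    def deserialize(self, key: str) -> tuple[str, bool]:
        # Return the claim plus a flag saying whether it predates the engine.
        concept = self._store[key]
        is_stale = concept.engine_version < self.engine_version
        return concept.claim, is_stale


memory = SemanticMemory()
memory.conceive("santa", "Santa Claus delivers the presents")
memory.upgrade_engine()  # cognitive sophistication arrives
memory.conceive("gravity", "Dropped objects fall")

print(memory.deserialize("santa"))    # ('Santa Claus delivers the presents', True)
print(memory.deserialize("gravity"))  # ('Dropped objects fall', False)
```

The obsolescence hazard corresponds to a store that drops the `engine_version` field: the Santa belief would load exactly as before, with nothing to prompt re-evaluation.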

Potential Research Vector: The fact that deserialization decouples your beliefs from your belief-engines has interesting implications for psychotherapy, and the mind-hacking industries of the future. I can imagine moral fictionalism (the view that moral claims are untrue, but useful to make) leveraging such a finding, for example.

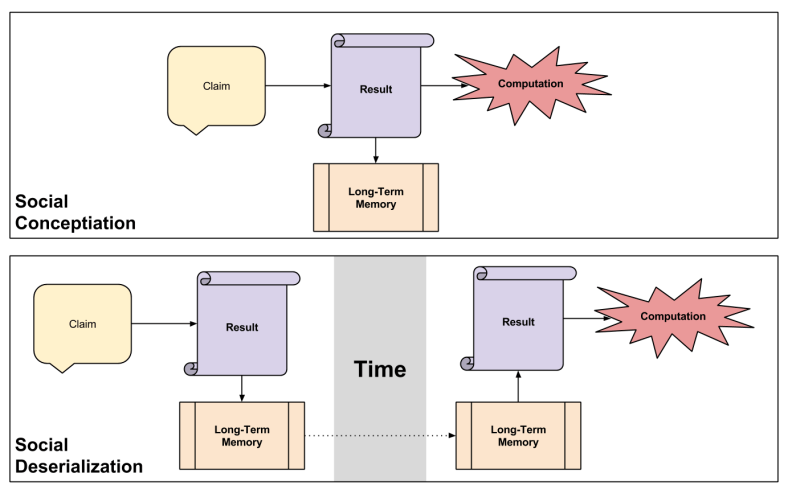

Social Deserialization: Epistemic Bypass Hazard

Let’s now consider deserialization reuse within social cognition modes:

Let me zoom into how social conceptiation is actually implemented in your brain. Do people believe every claim they hear?

The answer turns out to be… yes. Of course, you may disbelieve a claim; but to do so requires a later, optional process to analyze, and make an erasure decision about, the original claim. If you interrupt a person immediately after exposure to a social claim, you interrupt this posterior process and thereby increase acceptance irrespective of the content of the claim!

Social conceptiation, therefore, is less epistemically robust than inference conceptiation. Deserialization simply compounds this problem, by allowing the reuse of concepts that fail to be truth-tracking.
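The accept-first, evaluate-later ordering described above can be sketched in a few lines. This is an illustration of the control flow only, under the assumption that evaluation is a separate, skippable step; `hear_claim` and its `evaluate` parameter are hypothetical names, not anything from the literature.

```python
from typing import Callable, Optional, Set


def hear_claim(
    beliefs: Set[str],
    claim: str,
    evaluate: Optional[Callable[[str], bool]],
) -> None:
    """Toy model: a heard claim is stored first and only removed afterwards."""
    # Step 1: comprehension implies acceptance, so the claim is stored at once.
    beliefs.add(claim)

    # Step 2 is optional. An interrupted listener (evaluate is None) never
    # reaches it, so the claim stays regardless of its content.
    if evaluate is None:
        return

    # Step 3: the erasure decision, made only if evaluation actually ran.
    if not evaluate(claim):
        beliefs.discard(claim)


def always_reject(claim: str) -> bool:
    # Stand-in evaluator that judges every claim false.
    return False


attentive: Set[str] = set()
hear_claim(attentive, "the moon is made of cheese", evaluate=always_reject)

interrupted: Set[str] = set()
hear_claim(interrupted, "the moon is made of cheese", evaluate=None)

print(attentive)    # set()
print(interrupted)  # {'the moon is made of cheese'}
```

The same false claim ends up rejected in one case and retained in the other, and the only difference is whether the later step got to run.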

Potential Research Vector: Memetic theory postulates that, because your belief generation systems have a particular shape, certain properties of beliefs themselves influence cognition. I imagine that this distinction between concept acquisition modes would have interesting implications for memetic theory.

How To Select Away From Hazardous Deserialization

Unfortunately, from the subjective/phenomenological perspective, there is precious little you can do to feel the difference between hazardous and truth-bearing deserializations. The brain simply fails to tag its beliefs in any way that would be helpful.

Before proceeding, I want to underscore one point: the process of selecting away from hazards cannot be usefully divided into a noticing step and a selection step. If you notice hazard, you don’t need “tips” on how to select away from it: your brain is already hardwired with an action-guiding desire for truth-tracking beliefs. No, these steps remain together; your challenge is “merely” to learn how to raise hazardous patterns to your attention.

Let’s get specific. When I say “raise X to your attention”, what I mean is “when X is perceived, your analytic system (System 2) overrides the response of your automatic system (System 1)”. If this does not make sense to you, I’d recommend reading about dual process theory.

How does one encourage a domain-general stance favorable to such overrides? It turns out that there exists an observable personality trait – the need for cognition – which facilitates an increased override rate. Three suggestions that may help:

- Reward yourself when you feel curiosity.

- Inculcate an attitude of distrust when you notice yourself experiencing familiarity.

- Take advantage of your social mirroring circuit by surrounding yourself with others who possess high needs for cognition.

How can you encourage a domain-specific stance favorable to such overrides? In other words: how can you trigger overrides in hazardous conditions, in conditions where obsolescence or epistemic bypassing has occurred? So far, two approaches seem promising to me:

- Keep track of areas where you have been learning rapidly. Be more skeptical about deserializing concepts close to this domain.

- Train yourself to be skeptical of memes originating outside of yourself: whenever possible, try to reproduce the underlying logic yourself.

Of course, these suggestions won’t work exceptionally well, for the same reason self-help books aren’t particularly useful. In my language, your mind has a kind of volition resistance that tends to render such mind hacks temporary and/or ineffectual (“people don’t change”). But I’ll leave a discussion of why this might be so, and what can be done, for another day…

Takeaways

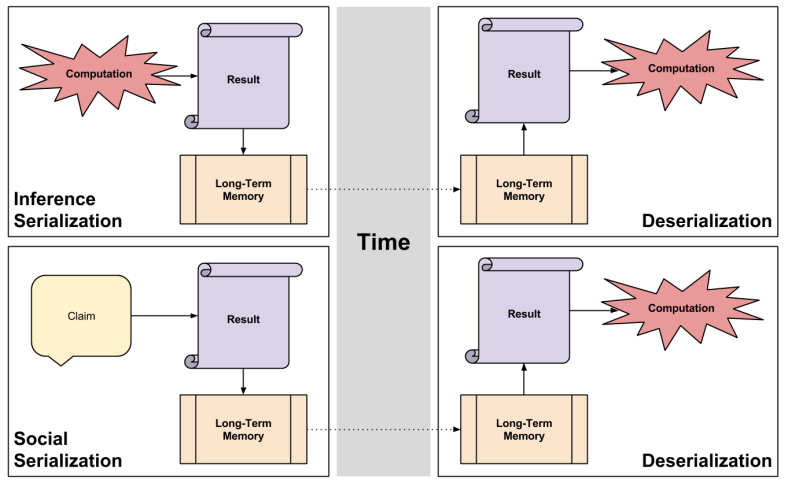

In this post, we explored how the brain recycles concepts in order to save time, via the deserialization technique discussed earlier. Such recycling brings with it two risks:

- Obsolescence: The concepts you resurrect may be inconsistent with your present beliefs.

- Epistemic Bypass: The concepts you resurrect may not have been evaluated at all.

We then identified two ways this mindware might enrich our lives:

- Getting precise about how concepts & conceptiation diverge will give us more control over our mental lives.

- Getting precise about how deserialization complements epistemic overrides will allow us to expand memetic accounts of culture.

Finally, we explored several ways in which we might encourage our minds to override hazardous deserialization patterns.