Content Summary: 2600 words, 26 minute read.

Introduction

It is no secret that the academic field of concepts is in disarray. In this article, Machery attempts to weave these disparate traditions into a compelling whole. But first, a quote which serves to motivate what follows:

Why do cognitive scientists want a theory of concepts? Theories of concepts are meant to explain the properties of our cognitive competences. People categorize the way they do, they draw the inductions they do, and so on, because of the properties of the concepts they have. Thus, providing a good theory of concepts could go a long way towards explaining some important higher cognitive competences.

Summarization text is grayscale, my commentary is in orange.

Article Metadata

- Article: Précis of Doing without Concepts

- Author: Edouard Machery

- Published: 11/2009

- Citations: 178 (note: as of 04/2014)

- Link: Here (note: not a permalink)

Section 1. Regimenting the use of concept in cognitive science

We start with definitions!

The world is not an undifferentiated sea of chaos. It has statistically noticeable patterns – “joints”. Let us call each of these delightful patterns in nature a category (or a natural kind). But categories are things in the world, and your mind must somehow learn these categories for itself. Plato once described the act of reasoning as: “That of dividing things again by classes, where the natural joints are, and not trying to break any part, after the manner of a bad carver.” (Phaedrus, 265e). This act – carving nature at its joints – is what concept processes perform. Concepts represent categories in your brain.

Let’s get specific about the properties of concepts. Machery defines concept as something that:

- Can be about a class, event, substance, or individual.

- Nonproprietary, not constrained by the underlying type of represented information.

- Constitutive elements can vary over time and across individuals.

- Some elements of information about X may not fit into the concept of X; let us call these data background knowledge.

- They are used by Default (I will define this in Section 3).

Section 2. Individuating concepts

Is it possible for an individual to possess different concepts of the same category?

Can Kevin possess two concepts of the category of chair?

Yes.

How do we individuate two related pieces of information, that would otherwise fall under the same concept?

I propose [that] when two elements of information about x, A and B, fulfill either of these [individuation] criteria, they belong to distinct concepts:

- Connection Criterion: If retrieving A (e.g., water is typically transparent) from LTM and using it in a cognitive process (e.g., a categorization process) does not facilitate the retrieval of B (e.g., water is made of molecules of H2O) from LTM and its use in some cognitive process, then A and B belong to two distinct concepts (WATER1 and WATER2).

- Coordination Criterion: If A and B yield conflicting judgments (e.g., the judgment that some liquid is water and the judgment that this very liquid is not water) and if I do not view either judgment as defeasible in light of the other judgment (i.e., if I hold both judgments to be equally authoritative), then A and B belong to two distinct concepts (WATER1 and WATER2).
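The two criteria combine as a simple either-or rule, which can be sketched as a predicate. This is only an illustrative reading of the quoted passage: the function name and its three boolean parameters are hypothetical, and deciding their values for real pieces of knowledge is the hard empirical part that the sketch leaves out.

```python
def belong_to_distinct_concepts(a_facilitates_b: bool,
                                judgments_conflict: bool,
                                either_judgment_defeasible: bool) -> bool:
    """Return True if A and B fall under distinct concepts (hypothetical helper).

    Inputs are assumed to have been settled empirically beforehand.
    """
    # Connection Criterion: retrieving and using A does not facilitate B.
    connection = not a_facilitates_b
    # Coordination Criterion: A and B yield conflicting judgments, and
    # neither judgment is treated as defeasible in light of the other.
    coordination = judgments_conflict and not either_judgment_defeasible
    # Meeting either criterion is enough.
    return connection or coordination
```

On this reading, two facts about water that prime each other and never produce an unresolvable conflict would stay within a single concept.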

Section 3. Defending the proposed notion of concept

Time to explore our last property of concepts, “used by Default”. Default is a name for “the assumption that some bodies of knowledge are retrieved by default when one is categorizing, reasoning, drawing analogies, and making inductions”. Say you are given a word problem involving counting apples and oranges. Default is the claim that a flood of concepts – including but not limited to arithmetic, the apple, the orange, trees, and fruit – will be drawn from long term memory (LTM) stores, and made available to your mental processes automatically.

At least two research traditions go against this claim:

- Concepts are not retrieved from LTM automatically; rather, they are summoned via conscious attention.

- Concepts are drawn from LTM automatically, but they are constructed on-the-fly. When you see an apple, you do not load a concept of apple that was hashed out long ago; instead, your mind queries your LTM for apple-related background knowledge, constructing transient concepts especially tailored for the peculiarities of the task at hand.

Machery makes three counterpoints:

- Only a pronounced amount of recall variability (e.g., highly divergent results for tweaking minor parameters of a word problem) would falsify Default in favor of on-the-fly concept construction.

- Empirical investigations only reveal moderate levels of recall variability.

- A substantial amount of evidence supports Default.

Section 4. Developing a psychological theory of concepts

A psychological theory of concepts must treat the following concerns:

- The nature of the information constitutive of concepts

- The nature of the processes that use concepts

- The nature of the vehicle of concepts

- The brain areas that are involved in possessing concepts

- The processes of concept acquisition

Section 5. Concept in cognitive science and in philosophy

The gist of the section:

Although both philosophers and cognitive scientists use the term concept, they are not talking about the same things. Cognitive scientists are talking about a certain kind of bodies of knowledge, they attempt to explain the properties of our categorization, inductions etc; whereas philosophers are talking about that which allows people to have propositional attitudes. Many controversies between philosophers and psychologists about the nature of concepts are thus vacuous.

An amusing aside: I would like to explicitly ground the definition of vacuous in some theory of concepts when I come to treat pragmatism.

Anyway, my tentative attempt to restate the above: Philosophers concern themselves with category-concept fidelity, whereas cognitive scientists concern themselves with the lifecycle of the concept within the mental ecosystem.

Section 6. The heterogeneity hypothesis versus the received view

Machery defines the received view as the assumption that, beyond differences within concept subject-matter, concepts share many properties that are scientifically interesting. Machery suggests that this is a mistake, and that the evidence suggests the existence of several distinct types of concept. Concept, in other words, is itself not a category (natural kind). A nuanced sentence if you’ve ever heard one. 🙂

The Heterogeneity Hypothesis, in contrast, claims that processes that produce concepts are distinct, that they share little in common.

Section 7. What kind of evidence could support the heterogeneity hypothesis?

Three kinds of evidence are predicted:

- When the conceptualization processes fire individually, we expect each to receive strong confirmation in just those experiments.

- When the conceptualization processes fire together, outputs may be incongruent, requiring mediation; we thus expect processing delays.

- Although the epistemology of dissociations is intricate, we should expect confirmation from neuropsychological data analysis.

Section 8. The fundamental kinds of concepts

Three different kinds of concepts exist in your cognitive architecture:

- Prototypes are bodies of statistical knowledge about a category, a substance, a type of event, and so on. For example, a prototype of dogs could store some statistical knowledge about the properties that are typical of dogs and/or the properties that are diagnostic of the class of dogs… Prototypes are typically assumed to be used in cognitive processes that compute similarity linearly.

- Exemplars are bodies of knowledge about individual members of a category (e.g., Fido, Rover), particular samples of a substance, and particular instances of a kind of event (e.g., my last visit to the dentist). Exemplars are typically assumed to be used in cognitive processes that compute the similarity nonlinearly.

- Theories are bodies of causal, functional, generic, and nomological knowledge about categories, substances, types of events, etc. A theory of dogs would consist of some such knowledge about dogs. Theories are typically assumed to be used in cognitive processes that engage in causal reasoning.
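The contrast between linear and nonlinear similarity can be made concrete with a minimal sketch. Everything here is an assumption for illustration: the binary features, the weights, and the exemplar values are made up, and the exponential-decay rule is borrowed from the general style of exemplar models in the categorization literature rather than taken from Machery's text.

```python
import math

# Hypothetical binary features: (has_fur, barks, four_legs).
DOG_PROTOTYPE_WEIGHTS = (0.2, 0.5, 0.3)  # illustrative weights, not empirical data
DOG_EXEMPLARS = [(1, 1, 1), (1, 1, 0)]   # stand-ins for "Fido" and "Rover"


def prototype_similarity(item, weights):
    """Linear rule: weighted sum of the typical features the item possesses."""
    return sum(w * f for w, f in zip(weights, item))


def exemplar_similarity(item, exemplars, sensitivity=2.0):
    """Nonlinear rule: summed exponential decay of distance to each exemplar."""
    total = 0.0
    for exemplar in exemplars:
        # City-block distance between the item and one stored exemplar.
        distance = sum(abs(a - b) for a, b in zip(item, exemplar))
        total += math.exp(-sensitivity * distance)
    return total
```

In the linear rule each feature contributes independently, so evidence simply adds up. In the nonlinear rule a close match to one remembered individual can dominate many partial matches, which is one way the two kinds of knowledge could yield different categorization behavior.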

Some phenomena are well explained if the concepts elicited by some experimental tasks are prototypes; some phenomena are well explained if the concepts elicited by other experimental tasks are exemplars; and yet other phenomena are well explained if the concepts elicited by yet other experimental tasks are theories. As already noted, if one assumes that experimental conditions prime the reliance on one type of concept (e.g., prototypes) instead of other types (e.g., exemplars and theories), this provides evidence for the heterogeneity hypothesis.

Let’s illustrate this situation with the work on categorical induction – the capacity to conclude that the members of a category possess a property from the fact that the members of another category possess it and to evaluate the probability of this generalization… the fact that different properties of our inductive competence are best explained by theories positing different theoretical entities constitutes evidence for the existence of distinct kinds of concepts used in distinct processes. Strikingly, this conclusion is consistent with the emerging consensus among psychologists that people rely on several distinct induction processes.

These arguments seem quite powerful at first glance. Even after reviewing peer-reviewed criticisms, their strength does not feel much diminished. Pending my own research into the forest of citations embedded within this section, I will proceed with my theorizing as though the Heterogeneity Hypothesis is true.

Section 9. Neo-empiricism

In contrast, neo-empiricism can be summarized with the following two theses:

- The knowledge that is stored in a concept is encoded in several perceptual and motor representational formats.

- Conceptual processing involves essentially re-enacting some perceptual and motor states and manipulating those states.

Amidst broader empirical concerns, Machery outlines three problems for the neo-empiricist school:

- Anderson’s problem: many competing versions of amodal concept theories exist, and neo-empiricists tend to assert victory over weaker versions of amodal theorizing.

- Imagery problem: it is hard to affirm that imagery is the only type of processes people have; people seem to have amodal concepts that are used in non-perceptual processes.

- Generality problem: some concepts (magnitude of classes, tonal sequences) have been empirically shown to be amodal, but neo-empiricists are bound to assume that all concepts are perceptual.

However, despite these concerns, Machery is happy to concede that there may “be something to” neo-empiricist arguments. In that case, a fourth, perceptual process would be added to the hypothesis. But the author suggests that, at this time, there is simply not enough evidence to justify this fourth concept-engine.

Machery seems not to appreciate an obvious implication here. Recall that all concepts are “conceived” and “reared” under perceptual supervision. What is there to prevent a daisy-chaining effect, whereby concepts are recalled which drag with them perceptual reconstructions, which permit new conceptual manipulations, and so on? This information pathway could explain phenomena such as Serial Associative Cognition, a Stanovichian term. One weakness of Machery is that he does not draw enough constraints from the broader decision-making literature; Serial Associative Cognition must be explained in the language of concepts just as much as Similarity Judgments.

Speaking generally, the manner in which percepts influence concept modification is severely under-explored. The exact same percept of a dog could be the first draft of an exemplar-concept (e.g., an infant), could subliminally modify a prototype-concept (e.g., an adult), or could explicitly falsify a theory-concept (e.g., a veterinarian). In the final analysis, it strikes me as unlikely that a perceptual concept-constructor module would simply be a cousin to the other three. I would expect neo-empiricist arguments to ultimately be housed in some larger framework, with a more complete description of perceptual processing.

Section 10. Hybrid theories of concepts

Hybrid theories of concepts grant the existence of several types of bodies of knowledge, but deny that these form distinct concepts; rather, these bodies of knowledge are the parts of concepts. Some hybrid theories have proposed that one part of a concept of x might store some statistical information about the x’s, while another part stores some information about specific members of the class of x’s, and a third part some causal, nomological, or functional information about the x’s…. [but] evidence tentatively suggests that prototypes, set of exemplars, and theories are not coordinated [in this way].

Section 11. Multi-process theories

While Machery is quick to concede that the evidence for many cognitive processes is incontrovertible, he retorts that dual-process theories traditionally fail to answer the following two questions:

- In what conditions are the cognitive processes underlying a given [module] triggered?

- If the cognitive processes are [simultaneously] triggered, how does the mind [coordinate] their outputs?

A legitimate criticism of dual-process theories.

What is known [regarding concepts and dual-process theories] can be presented briefly. It appears that the categorization processes can be triggered simultaneously, but that some circumstances prime reliance on one of the categorization processes. Reasoning out loud seems to prime people to rely on a theory-based process of categorization. Categorizing objects into a class with which one has little acquaintance seems to prime people to rely on exemplars. The same is true of these classes whose members appear to share few properties in common. Very little is known about the induction processes except for the fact that expertise seems to prime people to rely on theoretical knowledge about the classes involved.

This is irrelevant to dual-process theory… dual-process theory is concerned with how some mental processes become conscious, decontextualized, slow, and effortful, etc. The above quote is instead an unrelated (albeit interesting) glimpse at how the different conceptualization modules may interact.

Section 12. Open questions

Machery identifies three directions for future inquiry:

- There are several prototype theories, several exemplar theories, and several theory theories. It remains unclear which theory [of each type] is correct. Too little attention has been given to investigating the nature of prototypes, exemplars, and theories.

- The factors that determine whether an element of knowledge about x is part of the concept of x rather than being part of the background knowledge about x.

- How conceptualization may cohere with dual-process theories.

Dual-process theory is actually more expansive than Machery allows. The concept of Default, defined in Section 3, is a System 1 behavior. Thus, the questions of Default vs. Manual Override, Concept vs. Background Knowledge… these swiftly become absorbed into the need for dual-process theorizing…

Section 13. Concept eliminativism

Machery finally advances tentative philosophical and sociological reasons one might banish concept from our professional vocabulary.

Theoretical terms are often rejected when it is found that they fail to pick out natural kinds. To illustrate, some philosophers have proposed to eliminate the term emotion from the theoretical vocabulary of psychology on these grounds. The proposal here is that concept should be eliminated from the vocabulary of cognitive science for the same reason.

The continued use of concept in cognitive science might invite cognitive scientists to look for commonalities… if the heterogeneity hypothesis is correct, these efforts would be wasted. By contrast, replacing concept with prototype, exemplar, and theory would bring to the fore urgent open questions.

Interesting suggestions. However, I think it is clear that more theoretical weight lies in Machery’s heterogeneity hypothesis.

Concluding Thoughts

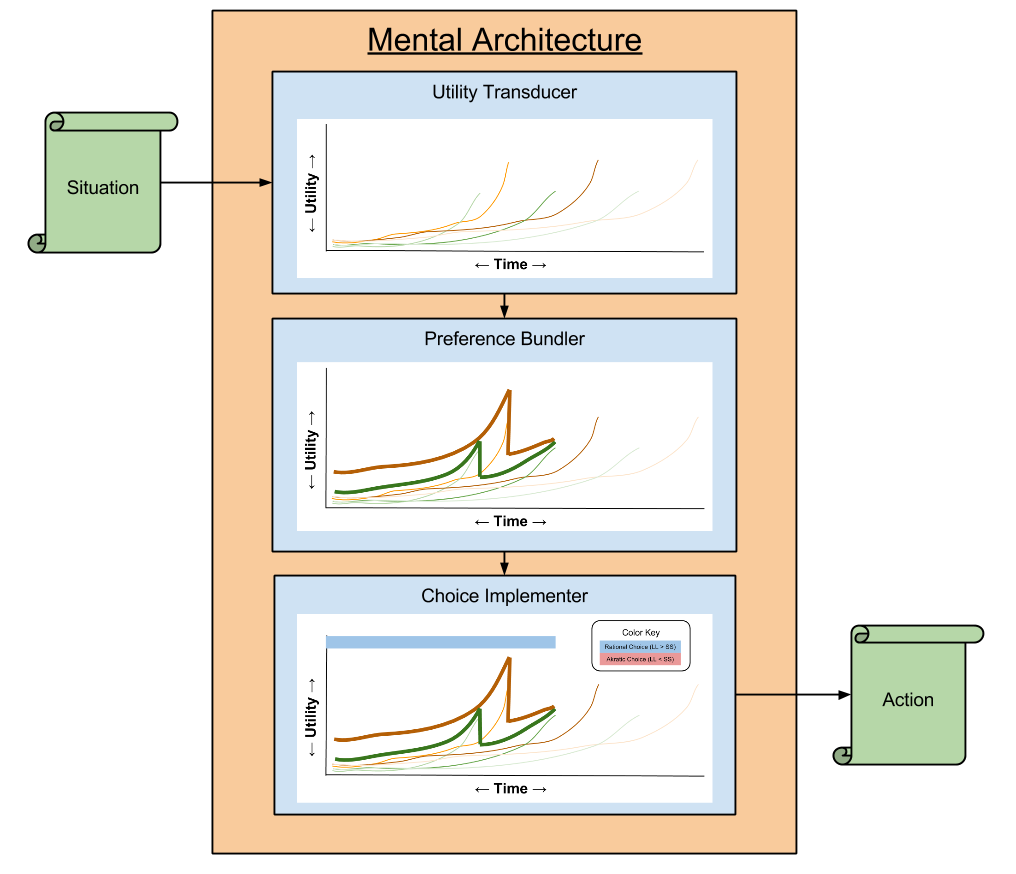

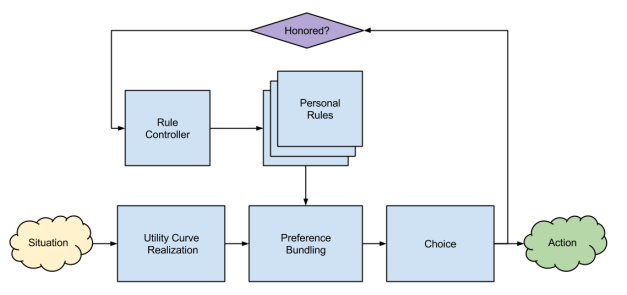

Three different kinds of concepts must imply three different kinds of conceptualization modules.

Novel prediction: damage to any one of these modules must inhibit only one kind of conceptualization.

Much, much more work is needed…

One counterargument made in the responses to this Précis caught my eye. David Danks of CMU argues that all three conceptualization modules can be modeled as special cases of a single graphical model representation. His paper, Theory Unification and Graphical Models in Human Categorization (2007), makes this case. Machery’s reply to this counterpoint is brief, pointing to its disconnect from biological evidence, although Machery elsewhere allows that causal models might underlie concept-theory construction (cf. A Theory of Causal Learning in Children: Causal Maps and Bayes Nets (2004)).

I will close with a quote from Couchman et al., in a response to this Précis:

Our task is to carve nature at its joints using the psychological knife called concepts. It is true, it is profoundly important to know, and it is all right for the progress of science that the knife is Swiss-Army issue with multiple blades.