Part Of: Breakdown of Will sequence

Followup To: Willpower As Preference Bundling

Content Summary: 900 words, 9 min reading time

Context

When “in the moment”, humans are susceptible to bad choices. Last time, we introduced willpower as a powerful solution to such akrasia. More specifically:

- Willpower is nothing more, and nothing less, than preference bundling.

- Inasmuch as your brain can sustain preference bundling, it has the potential to redeem its fits of akrasia.

But this only explained how preference bundling works at the level of utility curves. Today, we will learn how preference bundling is mentally implemented, and this mental model will in turn provide us with predictive power.

Building Mental Models

Time to construct a model! 🙂 You ready?!

In our last post, we discussed three distinct phases that occur during preference bundling. We can then imagine three separate modules (think: software programs) that implement these phases.

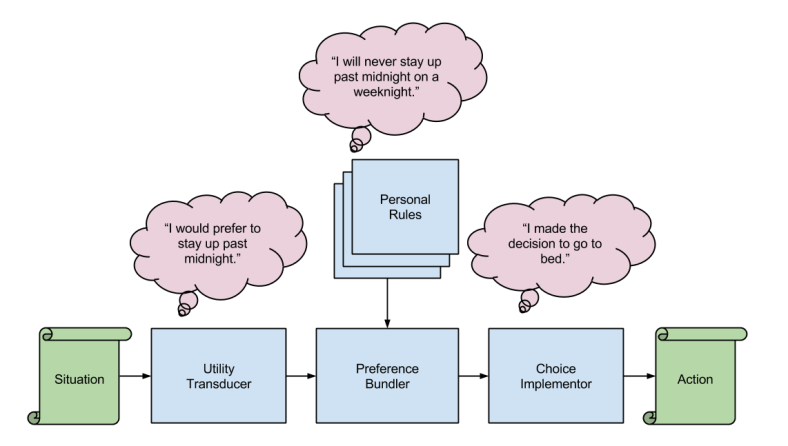

This diagram provides a high-level, functional account of how our minds make decisions. The three modules can be summarized as follows:

- The Utility Transducer module is responsible for identifying affordances within sensory phenomena, and compressing a multi-dimensional description into a one-dimensional value.

- The Preference Bundler module can aggregate utility representations that are sufficiently similar. Such a technique is useful for combating akrasia.

- The Choice Implementer module selects Choice1 if Preference1 > Preference2. It is also responsible for computing when and how to execute a preference-selection.
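To make the three modules concrete, here is a minimal sketch of the pipeline in Python. It is only an illustration: the feature names, the weights, the numbers, and the idea of treating "same label" as "sufficiently similar" are all my assumptions, not part of the model itself.

```python
from collections import defaultdict

# Hypothetical weights: how much each perceived feature counts toward utility.
WEIGHTS = {"fun": 1, "tiredness": -1}

def utility_transducer(features):
    """Compress a multi-dimensional description into a single utility value."""
    return sum(WEIGHTS[name] * value for name, value in features.items())

def preference_bundler(valuations):
    """Sum the utilities of choices that share a label (a stand-in for 'sufficiently similar')."""
    bundles = defaultdict(int)
    for label, utility in valuations:
        bundles[label] += utility
    return dict(bundles)

def choice_implementer(preferences, choice1, choice2):
    """Select choice1 if its preference is strictly greater, otherwise choice2."""
    return choice1 if preferences[choice1] > preferences[choice2] else choice2

def decide(percepts):
    valuations = [(label, utility_transducer(features)) for label, features in percepts]
    return choice_implementer(preference_bundler(valuations), "stay_up", "sleep")

# Made-up percepts: tonight's options, plus two later nights seen from a distance.
tonight = [("stay_up", {"fun": 6, "tiredness": 2}), ("sleep", {"fun": 3, "tiredness": 0})]
later_nights = [("stay_up", {"fun": 2, "tiredness": 2}), ("sleep", {"fun": 1, "tiredness": 0})] * 2

print(decide(tonight))                 # stay_up (4 vs 3)
print(decide(tonight + later_nights))  # sleep   (4 vs 5)
```

Judged alone, tonight's temptation wins; once the similar future choices are bundled in, the prudent option comes out ahead.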

The above diagram is, of course, merely a germinating seed of a more precise mental architecture (it turns out that mind-space is rather complex 🙂 ). Let us now refine our account of the Preference Bundler.

Personal Rules

Consider what it means for a brain to implement preference bundling. Your brain must receive anticipated-utility information from an arbitrary number of choice valuations, and aggregate similar decisions into a single measure.

Obviously, the mathematics of such a computation lies underneath your awareness (your superpower is math). However, does the process entirely fail to register in the small room of consciousness?

This seems unlikely, given the common phenomenal experience of personal rules. Is it not likely that the conscious experience of “I will never stay up past midnight on a weeknight” correlates in some way with the actions of the Preference Bundler?

Let’s generalize this question a bit. In the context of personal rules, we are inquiring about the meaning of quale-module links. This type of question is relevant in many other contexts as well. It seems to me that such links can be roughly modeled in the vocabulary of dual-process theory, where System 1 (parallel modules) data bubbles up into System 2 (sequential introspection) experience.

Let us now assume that the quale of personal rules correlates with some variety of mental substance. What would that substance have to include?

In terms of complexity analysis, it seems to me that a Preference Bundler need not generate relevant rules on the fly. Instead, it could more efficiently rely on a form of rule database, which tracks a set of rules proven useful in the past. Our mental architecture, then, looks something like this (qualia are in pink):

In his book, Ainslie presents intriguing connections between this idea of a rule database and similar notions in the history of ideas:

The bundling phenomenon implies that you will serve your long-range interest if you obey a personal rule to behave alike towards all members of a category. This is the equivalent of Kant’s categorical imperative, and echoes the psychologist Lawrence Kohlberg’s sixth and highest principle of moral reasoning, deciding according to principle. It also explains how people with fundamentally hyperbolic discount curves may sometimes learn to choose as if their curves were exponential.
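The last sentence of that quote is easier to appreciate with numbers. Below is a small sketch, assuming the common hyperbolic form value = amount / (1 + k × delay) with k = 1. The amounts, delays, and spacing are made up purely for illustration.

```python
def hyperbolic_value(amount, delay, k=1.0):
    """Present value under the assumed hyperbolic form amount / (1 + k * delay)."""
    return amount / (1.0 + k * delay)

def bundle_value(amount, first_delay, spacing, count):
    """Summed present value of `count` identical rewards, one every `spacing` time units."""
    return sum(hyperbolic_value(amount, first_delay + i * spacing) for i in range(count))

# Hypothetical numbers: a smaller-sooner reward (6, one unit away) versus
# a larger-later reward (14, four units away), with the choice recurring every 7 units.
SMALL, SMALL_DELAY = 6, 1
LARGE, LARGE_DELAY = 14, 4
SPACING = 7

def prefers_larger_later(count):
    """Compare the two options as bundles of `count` recurring choices."""
    small = bundle_value(SMALL, SMALL_DELAY, SPACING, count)
    large = bundle_value(LARGE, LARGE_DELAY, SPACING, count)
    return large > small

print(prefers_larger_later(1))  # False: judged alone, the smaller-sooner reward wins
print(prefers_larger_later(3))  # True: judged as a bundle of three, the larger-later reward wins
```

The curve itself never changes. Only the unit of choice does: a single night versus "all nights like this one".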

Recursive Feedback Loops

Personal rules, of course, do not spontaneously appear within your mind. They are constructed by cognitive processes. Let us again expand our model to capture this nuance:

Describing our new components:

- The Rule Controller module is responsible for both generating new rules (e.g., “I will not stay up past midnight on a weeknight”) and refactoring existing ones.

- The “Honored?” checkpoint conveys information on how well a given personal rule was followed. The Rule Controller module may use this information to update the rule database.
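Sticking with the software metaphor, the two components above might be sketched like this. Everything here is hypothetical: the class and method names, the bookkeeping of honored and violated counts, and especially the policy of flagging a rule once it has been broken more often than kept. The model only says that the Rule Controller *may* use the checkpoint's information; it does not say how.

```python
from dataclasses import dataclass

@dataclass
class PersonalRule:
    text: str
    honored: int = 0   # times the "Honored?" checkpoint reported compliance
    violated: int = 0  # times it reported a lapse

class RuleController:
    """Generates, tracks, and refactors personal rules (all names are hypothetical)."""

    def __init__(self):
        self.database = {}  # the rule database: situation -> PersonalRule

    def generate(self, situation, text):
        self.database[situation] = PersonalRule(text)

    def record(self, situation, honored):
        """Feed one "Honored?" result back into the database."""
        rule = self.database[situation]
        if honored:
            rule.honored += 1
        else:
            rule.violated += 1

    def needs_refactoring(self):
        """Assumed policy: flag rules broken more often than they were kept."""
        return [s for s, r in self.database.items() if r.violated > r.honored]

    def refactor(self, situation, new_text):
        """Replace a rule's wording and start its track record afresh."""
        self.database[situation] = PersonalRule(new_text)

controller = RuleController()
controller.generate("weeknight", "I will not stay up past midnight on a weeknight")
for honored in (True, False, False):
    controller.record("weeknight", honored)

# The replacement wording below is just an illustrative, looser rule.
for situation in controller.needs_refactoring():
    controller.refactor(situation, "I will be in bed by 12:30 on a weeknight")

print(controller.database["weeknight"].text)
```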

A feedback loop exists in our mental model. Observe:

Feedback loops can explain a host of strange behavior. Ainslie describes the torment of a dieter:

Even if [a food-conscious person] figures, from the perspective of distance, that dieting is better, her long-range perspective will be useless to her unless she can avoid making too many rationalizations. Her diet will succeed only insofar as she thinks that each act of compliance will be both necessary and effective – that is, that she can’t get away with cheating, and that her current compliance will give her enough reason not to cheat subsequently. The more she is doubtful of success, the more likely it will be that a single violation will make her lose this expectation and wreck her diet. Personal rules are a recursive mechanism; they continually take their own pulse, and if they feel it falter, that very fact will cause further faltering.
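Here is a toy simulation of that recursion. It is a sketch under loud assumptions: confidence in the rule lives on a 0 to 100 scale, the rule is honored whenever confidence is at least as strong as the day's temptation, and a lapse costs more confidence (20) than an act of compliance earns (5). None of these numbers come from Ainslie.

```python
def simulate(confidence, temptations, gain=5, loss=20):
    """Run the loop once per temptation; return (final_confidence, history of honored flags)."""
    history = []
    for temptation in temptations:
        honored = confidence >= temptation  # the "Honored?" checkpoint
        history.append(honored)
        if honored:
            confidence = min(100, confidence + gain)  # compliance strengthens the rule
        else:
            confidence = max(0, confidence - loss)    # a lapse weakens it
    return confidence, history

week = [50, 90, 50, 50, 50]  # one unusually strong temptation on day two

print(simulate(80, week))  # (80, [True, False, True, True, True])
print(simulate(60, week))  # (0, [True, False, False, False, False])
```

Both dieters cave on day two. The confident one recovers, while the doubtful one never honors the rule again: the same single violation, fed back through the loop, wrecks the diet.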

Takeaways

And that’s a wrap! 🙂 I am hoping to walk away from this article with two concepts firmly installed:

- Preference bundling is mentally implemented via a database of personal rules (“I will do X in situations that involve Y”).

- Personal rules constitute a feedback loop, whereby rule-compliance strengthens (and rule-circumvention weakens) the circuit.

Next Up: [Iterated Schizophrenic’s Dilemma]