Part Of: Sociality sequence

Followup To: Agent Detection: Life Recognizing Itself

Content Summary: 1500 words, 15 min read

David Hume once observed:

There is an universal tendency among mankind to conceive all beings like themselves, and to transfer to every object those qualities of which they are intimately conscious. We find faces in the moon, armies in the clouds; and, by a natural propensity, if not corrected by experience and reflection, ascribe malice or goodwill to every thing that hurts or pleases us … trees, mountains and streams are personified, and the inanimate parts of nature acquire sentiment and passion.

How did we acquire the ability to discover minds in the world? Let’s find out.

Equifinality: Awakening To Goals

Obviously enough, living things obey the laws of physics. But living things (which we called agents) also have properties unique to them: characteristic appearances (e.g., faces) and behaviors (e.g., self-propelled movement). In Life Recognizing Itself we saw how this allows the brain to build better prediction machines around these agents. We saw that differentiating agents does not require representing other minds. This fact allows us to appreciate the gradual maturation of the Agency Detection system as we travel across the Tree of Life.

Once an organism is detected, the Agent Classifier strains additional information out of its perceptual signature. But there are many other improvements we can make to our prediction machines. Consider, for example, the perspective of an infant who every night is picked up and placed in her crib.

On some nights she may be crawling around the kitchen, on others the family room, on others sitting in her parent’s lap on the couch. Despite these very diverse beginnings, the outcome of the putting-to-bed process is always the same. From the perspective of the neurons in her eyes, very different beginning states always result in the same end state. This property is known as equifinality, and it is not unique to nursery rooms: it is ubiquitous among living things (another example: the behavior of water buffalo around watering holes).

How might prediction machines anticipate equifinality? An efficient explanation of equifinality comes from ascribing goals to an agent.
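To make the efficiency claim concrete, here is a minimal sketch in Python, assuming a toy world in which each episode is just a (start, end) pair. The locations and function names are hypothetical illustrations, not a model of what infant brains actually compute. It contrasts remembering each starting state separately with ascribing a single goal.

```python
# Toy episodes: (where the infant started, where she ended up).
# The locations are illustrative assumptions, not data.
observations = [
    ("kitchen", "crib"),
    ("family room", "crib"),
    ("couch", "crib"),
]

def infer_goal(episodes):
    """Return the shared end state if every episode ends the same way, else None."""
    end_states = {end for _, end in episodes}
    return end_states.pop() if len(end_states) == 1 else None

def predict_end_state(start, goal, memory):
    """Prefer the ascribed goal; otherwise fall back to per-start-state memory."""
    if goal is not None:
        return goal
    return memory.get(start)  # None when this start state was never observed

goal = infer_goal(observations)
memory = dict(observations)

# "hallway" was never observed as a starting point.
print(predict_end_state("hallway", goal, memory))  # goal-based prediction
print(predict_end_state("hallway", None, memory))  # memory-only prediction
```

The goal-based predictor generalizes to a starting state it has never seen, while the memory-only predictor has nothing to say. That is the sense in which a goal is a compact summary of equifinal behavior.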

Dominance Hierarchies: Awakening To Belief

Minds capable of computing situation-agnostic equifinality already have an advantage: perhaps the lion just ate and is not hungry enough to give chase, but the gazelle does just as well to model the lion as universally hungry. But there is room to inject a little contingency. Return to our nursery example: the infant knows that the putting-to-bed desire is only activated at night. How best to anticipate contingent desire? An efficient explanation for the contingency of equifinality is ascribing beliefs to an agent.

In fact, there are myriad benefits to possessing belief inference systems besides equifinal contingency. Another important reward gradient stems from the most important social structure of the animal kingdom: the dominance hierarchy. Wolf packs, for example, feature an alpha male, who is given special feeding & reproductive privileges. In species like the baboon, this hierarchy becomes much more detailed: every individual male knows who is above & who is below him.

Dominance hierarchies can arguably exist without their members having theories of mind. But agility in climbing the hierarchy is under strong selective pressure, and effective navigation requires tremendous psychological prowess. For example, in his book A Primate’s Memoir, Robert Sapolsky recounts tales of his baboons forming alliances in their attempts to dethrone the sitting alpha. The ability to model the beliefs and desires of one’s alliance partner is surely a boon; hence discovering mind can be viewed as a selective consequence of the dominance hierarchy.

Intentional Stance

This tendency to ascribe beliefs and desires to other agents is known as the intentional stance. Let us name the module responsible for this ability the Agent Mentalizer. The intentional stance appears in children between 6 and 12 months (Gergely et al., 1994).

As with any algorithm in the Agency Detection system, we might expect the intentional stance to be quite vulnerable to false positives. And that is exactly what we find. For an extreme demonstration of this, consider the following video (taken from Heider & Simmel (1944)):

While the Agent Mentalizer was designed to understand the minds of other animals, it had no trouble ascribing beliefs and goals to two-dimensional shapes. This is roughly analogous to your email provider accepting a tennis ball as a login password.

Impression Management Via Secondary Models

In your brain, you have a set of beliefs about the world. These beliefs may be stored in many different memory systems, but all of them together improve the power of prediction that you wield over your environment. Your knowledge, your web of belief, I call a World Model. For every significant individual in your life, you have a collection of beliefs about them, provided by your relationship modeling system. Some of these beliefs are non-mental, obtained from systems like the Agent Classifier. Call these beliefs collectively your primary model. For every significant individual in your life, you have a primary model for them; your World Model contains many primary models.

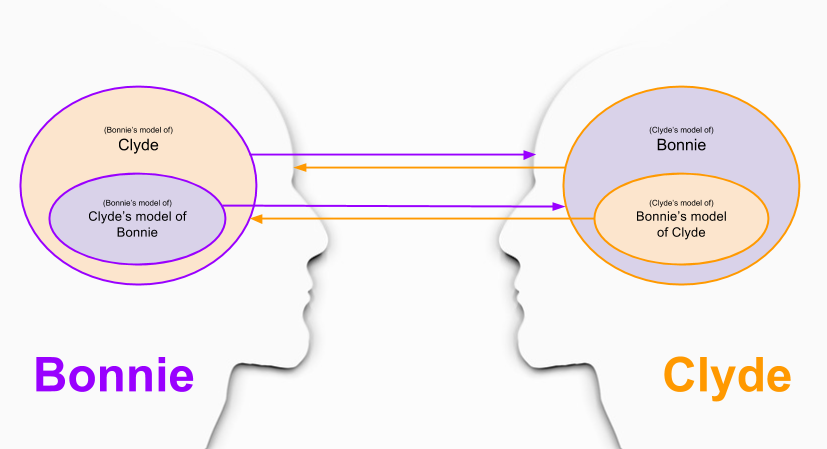

However, the Agent Mentalizer evolved to infer mental states (beliefs, goals) of other individuals as well. But simulating mental states is not like simulating objects – mental states are about objects. That is, we require a new type of model – a secondary model – to simulate the primary model of another person.

Confused? An example should help.

Imagine a three-year-old child, Bonnie, and her best friend Clyde. Since she was very young, Bonnie has been accumulating shared experiences with Clyde, and is now able to recognize him by appearance. These memories and knowledge of Clyde are stored in a primary model.

At the twelve-month mark, Bonnie acquired the ability to simulate beliefs/goals of other people. This awakening has greatly improved her understanding of Clyde. She began noticing when Clyde was not in the mood to play, and his opinions of various toys. She even became able to crudely simulate Clyde’s response to situation X, even if Clyde hadn’t encountered X in real life.

During this time, of course, Clyde went through the very same maturation process. We can model their co-understanding as follows:

The above graphic underscores the “egotistical” subset of secondary models: simulation of the impression they are making on one another. The intentional stance is the birthplace of impression management.

Against Ternary Models

If secondary models simulate primary models, can ternary models simulate secondary models? Well, let’s not close ourselves to the possibility. Here’s what such a thing looks like:

So… ternary models are hard to understand! Let’s simplify:

- A’s model of B = How B Appears To A

- B’s model of A’s model of B = How B thinks B appears to A

Make sure the construction logic of the above image is clear: you should have no trouble constructing a quaternary graphic, quinary graphic, etc. Doesn’t the raw ability to reason about such higher-order logics mean that your brain is capable of infinitely-nested meta-models?
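The construction logic can be sketched as a short recursive function. This is only an illustration in Python; the function name and the convention that depth 1 means "primary" are assumptions made for the example, and the sketch says nothing about how the brain implements nesting.

```python
def nested_model(observer, target, depth):
    """Describe a depth-level model: 1 = primary, 2 = secondary, 3 = ternary, ..."""
    if depth < 1:
        raise ValueError("depth must be at least 1")
    if depth == 1:
        return f"{observer}'s model of {target}"
    # A level-n model held by the observer wraps a level-(n-1) model held by the target.
    return f"{observer}'s model of {nested_model(target, observer, depth - 1)}"

for depth in range(1, 5):
    print(depth, nested_model("A", "B", depth))
```

Each extra level costs one more line of description, so writing down a quaternary or quinary model is trivial. Whether a brain can hold one in mind without effort is a separate question.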

A useful analogy here is the natural numbers (0, 1, 2, …). How can your brain hope to count arbitrarily large numbers of things? After all, folkmathematics is fueled with biochemistry; the number of representations it can store is finite. So… how many numbers can it count?

The correct answer is four. That is, your subitizing module makes counting up to four items almost instantaneous; for larger numbers, response times rise dramatically, with an extra 250-350 ms added for each item beyond about four. We see a similar time difference in recursive reasoning: most people are easily able to simulate other people (primary modeling) and conduct impression management (secondary modeling). But imagining the impression management of other people takes conscious effort, and thus cannot be part of your default Theory Of Mind machinery.
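As a rough worked example of the counting arithmetic, the Python sketch below computes only the added time implied by the 250-350 ms per-item figure quoted above. It assumes a hard cutoff at exactly four items and a strictly linear cost, both simplifications, and it deliberately leaves out any baseline response time, since none is given here.

```python
SUBITIZING_LIMIT = 4       # "about four items", treated here as a hard cutoff
PER_ITEM_MS = (250, 350)   # added time per extra item (low, high), from the text

def added_counting_time_ms(n_items):
    """Return the (low, high) added time in ms for counting n_items."""
    extra_items = max(0, n_items - SUBITIZING_LIMIT)
    low, high = PER_ITEM_MS
    return (extra_items * low, extra_items * high)

for n in (3, 4, 5, 7):
    print(n, added_counting_time_ms(n))
```

Under these assumptions, counting seven items adds between 750 and 1050 ms over the near-instant subitizing range.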

With these insights in mind, we see that ternary models ought not be admitted into our mental architecture simply because they are conceivable. Such a solution would not be parsimonious, since these complex behaviors can be built from simpler atomic components. In fact, in the simplification above, you can even see hints of your two-level brain “translating” ternary concepts into a more direct language that it better understands.

Takeaways

Here are the ideas I want you to walk away from this post with:

- Agents are able to steer different situations towards one outcome. Seeing a world of desire, a world of goals, is how children explain equifinality.

- Social animals typically compete for resources in the shadow of a dominance hierarchy. Modeling the beliefs of their peers about them improves social acumen, and with it, fitness.

- Ascribing beliefs and desires to other agents is known as the intentional stance. It appears very early in humans, between six and twelve months.

- We can formalize the above notions in representation theory. Thoughts about other people reside in primary models; thoughts about other people’s thoughts go in secondary models.

- Ternary models are unlikely to exist for the same reason that computers don’t feel inclined to count to infinity. Our brains can get there by recursively invoking more simple components.

Relevant Resources

- Gergely et al. (1994) Taking the intentional stance at 12 months of age

- Heider & Simmel (1944) An experimental study of apparent behavior

- Trick, L.M., & Pylyshyn, Z.W. (1994). Why are small and large numbers enumerated differently?