Part Of: Principles of Machine Learning sequence

Followup To: Five Tribes of Machine Learning

Content Summary: 1700 words, 17 min read

Overview

In Five Tribes of Machine Learning, I reviewed Pedro Domingos’ account of tribes within machine learning. These were the Symbolists, Connectionists, Bayesians, Evolutionaries, and Analogizers. Domingos thinks the future of machine learning lies in unifying these five tribes into a single algorithm. This master algorithm would weld together the different focal points of the various tribes (cf. the parable of the blind men and the elephant).

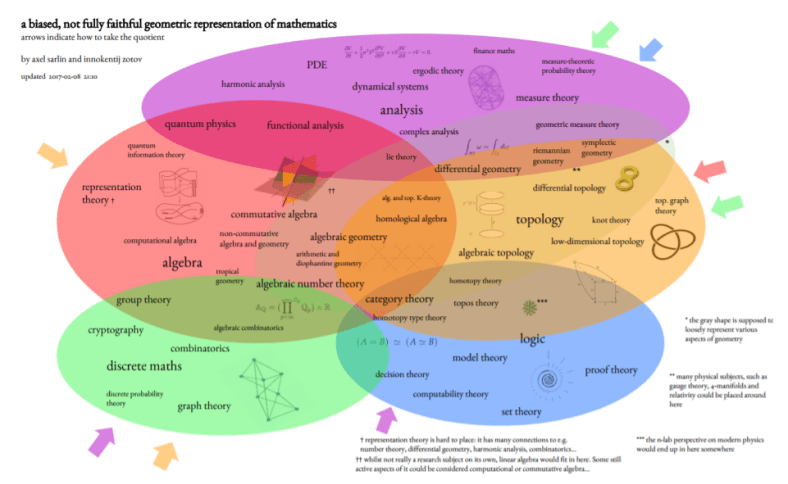

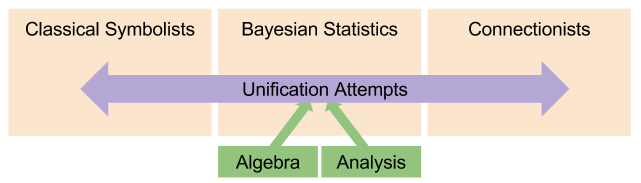

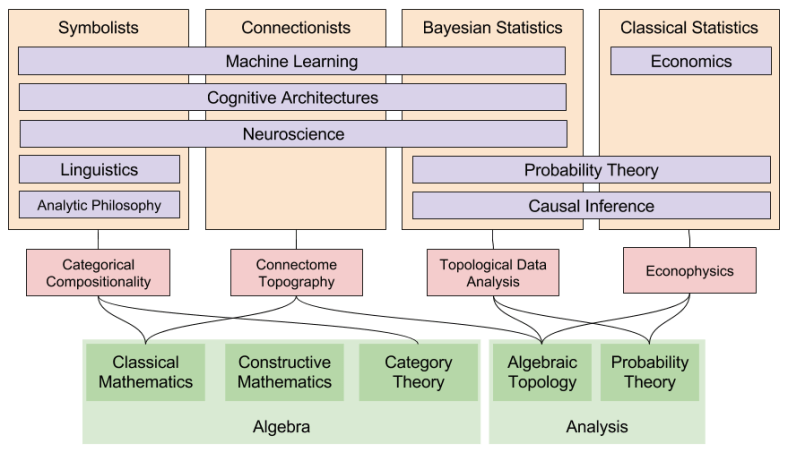

Today, I will argue that Domingos’ goal is worthy, but his approach too confined. Integrating theories of learning surely constitutes a constructive line of inquiry. But direct attempts to unify the tribes (e.g., Markov logic) are inadequate. Instead, we need to turn our gaze towards pure mathematics: the bedrock of machine learning theory. Just as there are tribes within machine learning, mathematical research has its own tribes (image credit Axel Sarlin):

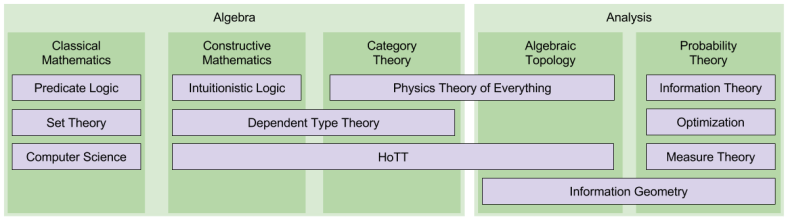

The tribes described by Domingos draw from the math of the 1950s. Attempting mergers based on these antiquated foundations is foolhardy. Instead, I will argue that updating towards modern foundational mathematics is a more productive way to pursue the master algorithm. Specifically, I submit that machine learning tribes should strive to incorporate constructive mathematics, category theory, and algebraic topology.

Classical Foundations

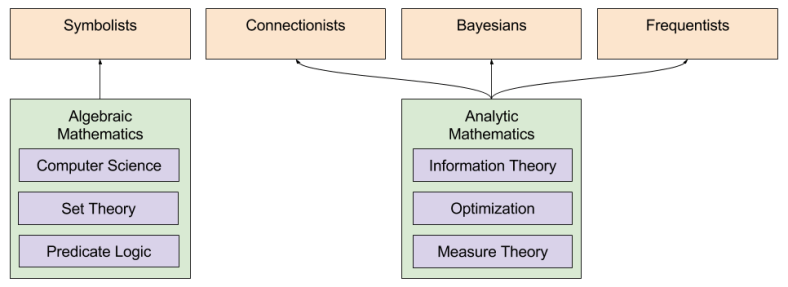

Domingos argues for five machine learning tribes. I argue for four. I agree that his Symbolists, Connectionists, and Bayesians are worthy of attention. But I will not consider his Evolutionaries and Analogizers: these tribes have been much less conceptually coherent, and also less influential. Finally, I submit Frequentists as a fourth tribe. While this discipline tends to self-identify as “predictive statistics” instead of “machine learning”, its technology is sufficiently similar to merit consideration.

The mathematical foundations of the Symbolists rest on predicate logic, invented by Gottlob Frege and C.S. Peirce. This calculus in turn forms the roots of set theory, invented by Georg Cantor and elaborated by Bertrand Russell. Note that 3 out of 4 of these names come from analytic philosophy. Alan Turing’s invention of his eponymous machine marked the birth of computer science. The twin pillars of computer science are computability theory and complexity theory, which in turn both rest on top of set theory. Finally, algorithm design connects with the mathematical discipline of combinatorics.

The foundation of the Statisticians (both Bayesian and Frequentist) is measure theory (which, in turn, borrows from set theory). The field of information theory gave probability distributions a quantitative concept of uncertainty: see entropy as belief uncertainty. Finally, formal theories of learning draw heavily from optimization, where model parameters are tuned against various objective functions.
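To make "entropy as belief uncertainty" concrete, here is a minimal Python sketch. The function name `shannon_entropy` and the example distributions are my own illustrative choices, not something taken from the sources above. It shows that a spread-out belief carries more entropy than a confident one.

```python
from math import log2

def shannon_entropy(probs):
    """Shannon entropy in bits; zero-probability outcomes contribute nothing."""
    return -sum(p * log2(p) for p in probs if p > 0)

# Hypothetical beliefs over four outcomes.
uniform = [0.25, 0.25, 0.25, 0.25]   # maximally uncertain
peaked = [0.97, 0.01, 0.01, 0.01]    # nearly certain
certain = [1.0, 0.0, 0.0, 0.0]       # no uncertainty at all

entropies = {
    "uniform": shannon_entropy(uniform),
    "peaked": shannon_entropy(peaked),
    "certain": shannon_entropy(certain),
}
```

The ordering is the point: the uniform belief has the highest entropy, the certain belief has none.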

Mathematical research can largely be decomposed into two flavors: algebraic and analytical. Algebra focuses on mathematical objects and structures: group theory, for example, falls under its umbrella. Analysis alternatively focuses on continuity, and includes fields like measure theory and calculus. Notice that the mathematical foundations of the Symbolists are fundamentally algebraic, whereas those of the Statisticians are analytic. This gets at the root of why machine learning tribes often have difficulty communicating with one another.

Classical Applications

We have already noted that Symbolists, Connectionists, and Bayesians have all created applications in machine learning (decision trees, neural networks, and graphical models, respectively). These tribes are also expressed in neuroscience (language of thought, Hebbian learning, and Bayesian Brain, respectively). They have also all developed their own flavors of cognitive architectures (e.g., production rule systems, attractor networks, and predictive coding, respectively).

Frequentist Statisticians have no real presence in machine learning, neuroscience, or cognitive architecture. But they are the dominant tribe in the social sciences, e.g., econometrics.

I should also note that, in addition to the fields already noted, Symbolists have a unique presence in linguistics (especially Chomskyan universal grammar) and analytic philosophy (cf. that field’s heavy reliance on predicate logic, and the linguistic turn in the early twentieth century).

Finally, causal inference only exists in the Bayesian (Pearlian d-separation) and Frequentist (Rubin potential outcome models) tribes. To my knowledge, this technology has not yet been robustly integrated into the Symbolist or Connectionist tribes.

These four tribes largely draw from early twentieth century mathematics. Let us now turn to what mathematicians have been up to in the past century.

Towards New Foundations

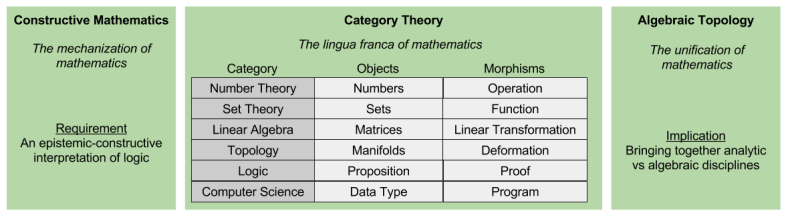

Let me now introduce you to three developments in modern mathematics: constructive mathematics, category theory, and algebraic topology.

In classical logic, truth is interpreted ontologically: as a fact about the world. But truth can also be interpreted epistemically: a true proposition is one that we can prove. This epistemic reading (formalized as intuitionistic logic) has us reject the Law of Excluded Middle (LEM) as a general axiom: failing to prove a proposition is not the same thing as disproving it.

By removing LEM from mathematics, proof by contradiction in its classical form (concluding P from the absurdity of not-P) becomes unavailable. While this may seem limiting, it also opens the door to constructive mathematics: mathematics whose proofs can be entered into, and verified by, a computer. Erdős’ Book of God will be supplanted by the GitHub of God.
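A small Lean 4 sketch may help pin down what is actually lost. Strictly speaking, deriving a negation from a contradiction stays constructive; it is double-negation elimination that requires a classical axiom. The three statements below are my own illustrative choices, written as anonymous `example`s so that no names are assumed.

```lean
-- Proving a negation by deriving a contradiction remains constructive.
example (P : Prop) : ¬ (P ∧ ¬ P) :=
  fun h => h.2 h.1

-- The double negation of LEM is provable with no classical axiom.
example (P : Prop) : ¬ ¬ (P ∨ ¬ P) :=
  fun h => h (Or.inr (fun p => h (Or.inl p)))

-- Double-negation elimination is the step that needs classical reasoning.
example (P : Prop) : ¬ ¬ P → P :=
  fun h => Classical.byContradiction h
```

Only the last example reaches into the `Classical` namespace; the first two are ordinary functions.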

In recent years, category theory has emerged as the lingua franca of theoretical mathematics. It is built on the observation that all mathematical disciplines (algebraic and analytic) fundamentally describe mathematical objects and their relationships. Importantly, category theory allows theorems proved in one category to be translated into entirely novel disciplines.

Finally, since Alexander Grothendieck’s work on sheaf and topos theory, algebraic topology (and algebraic geometry) have come to occupy an increasingly central role in mathematics. This trend has only intensified in the 21st century. As John Baez puts it,

These are just the first steps in the ‘homotopification’ of mathematics, a trend in which algebra more and more comes to resemble topology, and ultimately abstract ‘spaces’ (for example, homotopy types) are considered as fundamental as sets.

These three “pillars” are perhaps best motivated by the technologies that rest on them.

Computational trinitarianism is built on deep symmetries between proof theory, type theory, and category theory. The movement is encapsulated in the slogans “Proofs are Programs” and “Propositions are Types”. This realization led to the development of Martin-Löf dependent type theory, which in turn has led to theorem-proving software packages such as Coq.
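The two slogans can be read off directly from a proof assistant. The following Lean 4 sketch (Lean rather than Coq, purely for illustration; the name `pairSwap` is hypothetical) shows a proof and a program with the same shape.

```lean
-- Modus ponens is function application: a proof of P → Q applied to a proof of P.
example (P Q : Prop) (f : P → Q) (p : P) : Q := f p

-- A proof that conjunction commutes is a program that swaps a pair of proofs.
example (P Q : Prop) : P ∧ Q → Q ∧ P :=
  fun ⟨p, q⟩ => ⟨q, p⟩

-- The same term, read as an ordinary program on data.
def pairSwap {α β : Type} : α × β → β × α :=
  fun (a, b) => (b, a)
```

The second and third definitions differ only in whether the components are proofs or values, which is the content of “Propositions are Types”.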

In metamathematics, researchers investigate whether a single formal language can form the basis of the rest of mathematics. Historically, candidates have included Zermelo-Fraenkel (ZF) set theory and, more recently, the Elementary Theory of the Category of Sets (ETCS). Homotopy type theory (HoTT) is a new entry into the arena, and extends computational trinitarianism with the Univalence Axiom, an entirely new interpretation of logical equality. Under the hood, the univalence axiom relies on a topological interpretation of the equality type. Suffice it to say, this particular theory has recently inspired a torrent of novel research. Time will tell how things develop.

Thermodynamics is built on the idea of Gibbs entropy (or, more formally, free energy). The basic intuition, which stems from statistical physics, is that disorder tends to increase over time. And thermodynamics does appear to be relevant in a truly diverse set of physical phenomena.

- In physics, entropy is the reason behind the arrow of time (its “forward directionality”)

- In chemistry, entropy forms the basis for spontaneous (asymmetric) reactions

- In paleoclimatology, there is increasing reason to think that abiogenesis occurred via a thermodynamic process.

- In anatomy, entropy is the organizing principle underlying cellular metabolism.

- In ecology, entropy explains emergent phenomena related to biodiversity.

If I were to point at one candidate for the Universal Algorithm, entropy minimization would be my first pick. It turns out, strangely enough, that thermodynamic (Gibbs) entropy has the same functional form as information-theoretic (Shannon) entropy, which measures uncertainty in probability distributions. This is no accident. Information geometry extends this notion of “thermodynamic information” by interpreting families of probability distributions as statistical manifolds.
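For reference, the shared functional form can be written out. Here I assume the same probabilities p_i over microstates (or outcomes) in both formulas, with k_B denoting Boltzmann's constant and H measured in bits:

```latex
S = -k_B \sum_i p_i \ln p_i
\qquad\text{and}\qquad
H = -\sum_i p_i \log_2 p_i
\quad\Longrightarrow\quad
S = (k_B \ln 2)\, H
```

Under that assumption the two quantities differ only by a constant factor, which amounts to a choice of units.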

In physics, of course, the two dominant theories of nature (general relativity and quantum field theory) are mutually incompatible. It is increasingly becoming apparent that quantum topology is the most viable way to achieve a Grand Unified Theory. From this paper,

Feynman diagrams are used to reason about quantum processes. In the 1980s, it became clear that underlying these diagrams is a powerful analogy between quantum physics and topology. Namely, a linear operator behaves very much like a ‘cobordism’: a manifold representing spacetime, going between two manifolds representing space. This led to a burst of work on topological quantum field theory and ‘quantum topology’

Searching For Unity

That was a lot of content. Let’s zoom out. What is the point of being introduced to these new foundations? To give a more detailed intuition about which ML research is worthy of your attention (and participation!).

Most attempts to unify machine learning draw from merely classical foundations. For example, consider fuzzy logic, Markov logic networks, Dempster-Shafer theory, and Bayesian Neural Networks. While these ideas may be worth learning (particularly the last two), as candidates for unification they are necessarily incomplete; doomed by their unimaginative foundations.

In contrast, I submit you should funnel more enthusiasm towards ideas that draw from our new foundations. These may be active research concepts.

- In linguistics, categorical compositionality is the marriage of category theory and traditional syntax. It blends nicely with probabilistic approaches to meaning (e.g., word2vec). See this 2015 paper, for example.

- In statistics, topological data analysis is a rapidly expanding discipline. Rather than limiting oneself to probabilistic distribution theory (exponential families), this approach to statistics incorporates structural notions from algebraic topology. See this introductory tutorial, for example.

- In neuroscience, the most recent Blue Brain experiment suggests that Hebbian-style learning is not the whole story. Instead, the brain seems to rely on connectome topography: dynamically summoning and dispersing cliques of neurons, whose cooperation subsequently disappears like a tower of sand.

- In macroeconomics, neoclassical models (based on partial differential equations) are being challenged by a new kind of model, econophysics, which views the market as a kind of heat machine.
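To give a flavor of the topological data analysis item above, here is a minimal Python sketch of its simplest structural invariant: the number of connected components (Betti-0) of a point cloud, tracked across distance scales. The function `count_components`, the toy `cloud`, and the chosen scales are all hypothetical; real TDA libraries go much further and also compute higher-dimensional features.

```python
from itertools import combinations
from math import dist

def count_components(points, eps):
    """Count connected components of the graph linking points at distance <= eps."""
    parent = list(range(len(points)))

    def find(i):
        # Follow parent links to the root, compressing the path as we go.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    for i, j in combinations(range(len(points)), 2):
        if dist(points[i], points[j]) <= eps:
            parent[find(i)] = find(j)  # merge the two components

    return len({find(i) for i in range(len(points))})

# Hypothetical point cloud: two well-separated clusters.
cloud = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (5.0, 5.0), (5.1, 5.0)]
betti0_by_scale = {eps: count_components(cloud, eps) for eps in (0.05, 0.5, 10.0)}
```

At the smallest scale every point is its own component, at the middle scale the two clusters appear, and at the largest scale everything merges. The features that persist across many scales are the ones this approach treats as signal.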

Or they may be entirely unexplored questions that dawn on you by contemplating conceptual lacunae.

- What would happen if I were to re-imagine probability theory from intuitionistic principles?

- How might I formalize production rule cognitive architectures like ACT-R in category theory?

- Is there a way to understand neural network behavior and the information bottleneck from a topological perspective?

Until next time.
