Part Of: Machine Learning sequence

Content Summary: 900 words, 9 min read

ML is tribal, not monolithic

Research in artificial intelligence (AI) and machine learning (ML) has been going on for decades. Indeed, the textbook Artificial Intelligence: A Modern Approach reveals a dizzying variety of learning algorithms and inference schemes. How can we make sense of all the technologies on offer?

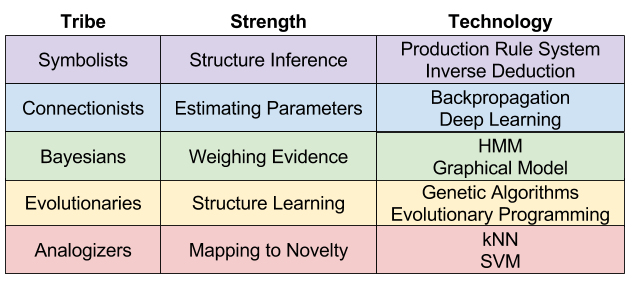

As argued in Domingos’ book The Master Algorithm, the discipline is not monolithic. Instead, five tribes have progressed relatively independently. What are these tribes?

- Symbolists use formal systems. They are influenced by computer science, linguistics, and analytic philosophy.

- Connectionists use neural networks. They are influenced by neuroscience.

- Bayesians use probabilistic inference. They are influenced by statistics.

- Evolutionaries are interested in evolving structure. They are influenced by biology.

- Analogizers are interested in mapping to new situations. They are influenced by psychology.

Expert readers may better recognize these tribes by their signature technologies:

- Symbolists use decision trees, production rule systems, and inductive logic programming.

- Connectionists rely on deep learning technologies, including recurrent neural networks (RNNs), convolutional neural networks (CNNs), and deep reinforcement learning.

- Bayesians use Hidden Markov Models, graphical models, and causal inference.

- Evolutionaries use genetic algorithms, evolutionary programming, and evolutionary game theory.

- Analogizers use k-nearest neighbor and support vector machines.
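To make one of these signature technologies concrete, here is a minimal sketch of k-nearest neighbor, the Analogizer idea of handling a new situation by finding the most similar past ones. The function name `knn_predict` and the toy data are my own illustrative assumptions, not taken from any particular library.

```python
from collections import Counter
import math

def knn_predict(train, query, k=3):
    """Classify `query` by majority vote among its k nearest training points."""
    # Sort training examples by Euclidean distance to the query point.
    nearest = sorted(train, key=lambda ex: math.dist(ex[0], query))[:k]
    # Majority vote over the labels of the k closest examples.
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]

# Hypothetical toy data: (features, label) pairs forming two clusters.
train = [
    ((1.0, 1.0), "a"), ((1.2, 0.8), "a"), ((0.9, 1.1), "a"),
    ((5.0, 5.0), "b"), ((5.2, 4.9), "b"), ((4.8, 5.1), "b"),
]

prediction = knn_predict(train, (1.1, 0.9), k=3)
```

Note that there is no training step at all: the "model" is just the stored examples plus a similarity measure, which is what sets this tribe apart from the others.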

In fact, my blog can be meaningfully organized under this research landscape.

- I explore Symbolist research in my Logic and Algebra sequences.

- I explore Connectionist research in my Reinforcement Learning sequence.

- I explore Bayesian research in my Probability Theory, Bayesian Statistics, Graphical Models, and Causal Inference sequences.

- I explore Evolutionary research in my Game Theory sequence.

- I have not yet explored Analogizer research.

History of Influence

Here are some historical highlights in the development of artificial intelligence.

Symbolist highlights:

- 1950: Alan Turing proposes the Turing Test in Computing Machinery and Intelligence.

- 1974-80: The frame problem & combinatorial explosion cause the First AI Winter.

- 1980: Expert systems & production rules re-animate the field.

- 1987-93: Expert systems prove too fragile & expensive, causing the Second AI Winter.

- 1997: Deep Blue defeats reigning chess world champion Garry Kasparov.

Connectionist highlights:

- 1957: Perceptron invented by Frank Rosenblatt.

- 1969: Minsky and Papert publish the book Perceptrons, criticizing single-layer perceptrons. This puts the entire field to sleep, until...

- 1986: Backpropagation is popularized by Rumelhart, Hinton, and Williams, and connectionist research restarts.

- 2006: Hinton et al. publish A fast learning algorithm for deep belief nets, which rejuvenates interest in Deep Learning.

- 2017: AlphaGo defeats Ke Jie, then the world's top-ranked Go player, using deep reinforcement learning (DRL).

Bayesian highlights:

- 1953: Markov chain Monte Carlo (MCMC) invented. Bayesian inference finally becomes tractable on real problems.

- 1968: Hidden Markov Model (HMM) invented.

- 1988: Judea Pearl authors Probabilistic Reasoning in Intelligent Systems, and creates the discipline of probabilistic graphical models (PGMs).

- 2000: Judea Pearl authors Causality: Models, Reasoning, and Inference, and creates the discipline of causal inference on PGMs.

Evolutionary highlights:

- 1975: Holland invents genetic algorithms.

Analogizer highlights:

- 1968: k-nearest neighbor algorithm increases in popularity.

- 1979: Douglas Hofstadter publishes Gödel, Escher, Bach.

- 1992: support vector machines (SVMs) invented.

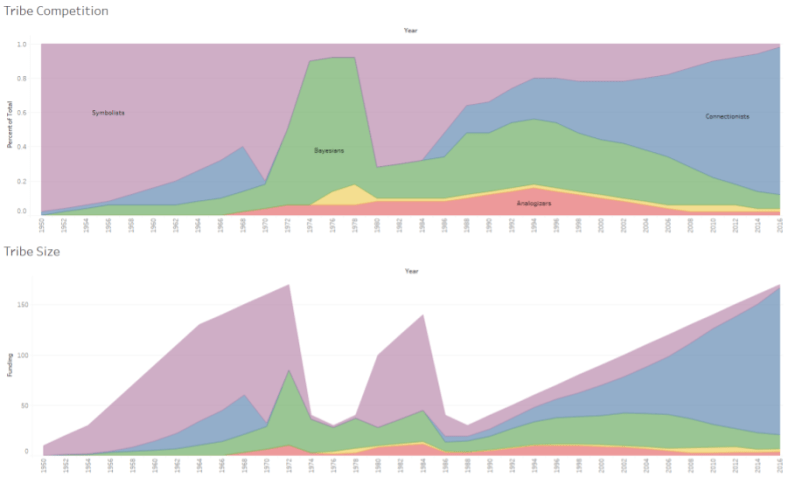

We can summarize this information visually, by creating an AI version of the Histomap:

These data are my own impression of AI history. It would be interesting to replace them with real funding & paper volume data.

Efforts Towards Unification

Will there be more or fewer tribes, twenty years from now? And which sociological outcome is best for AI research overall?

Theory pluralism and cognitive diversity are underappreciated assets to the sciences. But scientific progress is often heralded by unification. Unification comes in two flavors:

- Reduction: identifying isomorphisms between competing languages,

- Generalization: creating a supertheory that yields antecedents as special cases.

Perhaps AI progress will mirror revolutions in physics, like when Maxwell unified theories of electricity and magnetism.

Symbolists, Connectionists, and Bayesians suffer from a lack of stability, generality, and creativity, respectively. But one tribe’s weakness is another tribe’s strength. This is a big reason why unification seems worthwhile.

What’s more, each tribe possesses “killer apps” that other tribes would benefit from. For example, only Bayesians are able to do causal inference. Learning causal relations in logical structures, or in neural networks, remains an important unsolved problem. Similarly, only Connectionists are able to explain modularity (function localization). Symbolist and Bayesian tribes are more normative than Connectionism, which makes their technologies tend towards (overly?) universal mechanisms.

Symbolic vs Subsymbolic

You’ve heard of the symbolic-subsymbolic debate? It’s about reconciling Symbolist and Connectionist interpretations of neuroscience. Some (e.g., [M01]) claim that both theories might be correct, each at a different level of abstraction. Marr [M82] once outlined a hierarchy of explanation, as follows:

- Computational: what is the structure of the task, and viable solutions?

- Algorithmic: what procedure should be carried out, in producing a solution?

- Implementation: what biological mechanism in the brain performs the work?

One theory, supported by [FP98], is that Symbolist architectures (e.g., ACT-R) may be valid explanations, but somehow “carried out” by Connectionist algorithms & representations.

I have put forward my own theory, that Symbolist representations are properties of the Algorithmic Mind, whereas Connectionism is more relevant in the Autonomic Mind.

This distinction may help us make sense of why [D15] proposes Markov Logic Networks (MLN) as a bridge between Symbolist logic and Bayesian graphical models. He is seeking to generalize these technologies into a single construct, in the hope that he can later find a reduction of MLN in the Connectionist paradigm. Time will tell.
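As a rough illustration of how an MLN bridges logic and probability: each logical formula carries a weight, and a possible world's probability is proportional to the exponential of the summed weights of the formula groundings it satisfies. The sketch below enumerates the four worlds of a hypothetical one-formula network. The formula Smokes(A) => Cancer(A), the weight value, and all function names are assumptions chosen for illustration, not an example drawn from [D15].

```python
import itertools
import math

# Hypothetical one-formula MLN over a single person A:
#   Smokes(A) => Cancer(A), with an illustrative weight W.
W = 1.5

def formula_holds(smokes, cancer):
    # Material implication: false only when smokes is true and cancer is false.
    return (not smokes) or cancer

def world_probabilities(weight):
    """Return P(world) for every truth assignment to (Smokes(A), Cancer(A))."""
    worlds = list(itertools.product([False, True], repeat=2))
    # Unnormalized score: exp(weight * number of satisfied groundings).
    scores = {w: math.exp(weight * int(formula_holds(*w))) for w in worlds}
    z = sum(scores.values())  # partition function (normalizing constant)
    return {w: s / z for w, s in scores.items()}

probs = world_probabilities(W)
for world, p in probs.items():
    print(world, round(p, 3))
```

The world that violates the rule (smokes without cancer) is less probable but not impossible. Setting the weight to zero makes every world equally likely, while letting it grow large recovers hard logic, where the violating world's probability approaches zero.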

Takeaways

Today we discussed five tribes within ML research: Symbolists, Connectionists, Bayesians, Evolutionaries, and Analogizers. Each tribe has different strengths, technologies, and developmental trajectories. These categories help to parse technical disputes, and locate promising research vectors.

The most significant problem facing ML research today is: how do we unify these tribes?

References

- [D15] Domingos (2015). The Master Algorithm

- [M01] Marcus (2001). The Algebraic Mind

- [M82] Marr (1982). Vision

- [FP98] Fodor & Pylyshyn (1988). Connectionism and cognitive architecture: A critical analysis

Hey Kevin,

I just want to say I’ve been reading you for about a year now and you’ve got a reader for life in me! The way you break down complex topics into short and easy to understand posts I love. I try to do in my more public-oriented posts. Anyway, can say, I’ve been outlining a post about a synthesis of the ML learning tribes. You helped me develop a better intuitive mental model for their differences. Anyway, I’ll definitely hit you up when I post it, aha.

– Aldritch

Hi Aldritch,

Thanks for the comment! I am looking forward to reading your synthesis re: ML tribes. 🙂

Kevin

Machine learning is the newest form of data analysis. This new technology helps to automate analytical model building. It uses algorithms that continuously assess and learn from data, machine learning enables computers to access hidden insights.
