Part Of: Machine Learning sequence

Content Summary: 800 words, 8 min read

Projection as Geometric Approximation

If we have a vector and a line determined by vector

, how do we find the point on the line that is closest to

?

The closest point is at the intersection formed by a line through

that is orthogonal to

. If we think of

as an approximation to

, then the length of

is the error of that approximation.

This formula captures projection onto a vector. But what if you want to project to a higher dimensional surface?

Imagine a plane, whose basis vectors are and

. This plane can be described with a matrix, by mapping the basis vectors onto its column space:

Suppose we want to project vector onto this plane. We can use the same orthogonality principle as before:

Matrices like are self-transpositions. We have shown that such matrices are square symmetric, and thereby contain positive, real eigenvalues.

We shall assume that the columns of are independent, and it thereby is invertible. The inverse thereby allows us to solve for

:

Recall that,

Since matrices are linear transformations (functions that operate on vectors), it is natural to express the problem in terms of a projection matrix , that accepts a vector

, and outputs the approximating vector

:

By combining these two formula, we solve for :

Thus, we have two perspectives on the same underlying formula:

Linear Regression via Projection

We have previously noted that machine learning attempts to approximate the shape of the data. Prediction functions include classification (discrete output) and regression (continuous output).

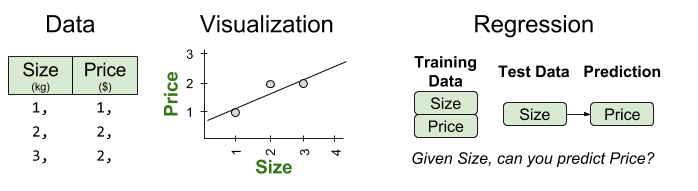

Consider an example with three data points. Can we predict the price of the next item, given its size?

For these data, a linear regression function will take the following form:

We can thus interpret linear regression as an attempt to solve :

In this example, we have more data than parameters (3 vs 2). In real-world problems, it is an extremely common predicament. It yields matrices with may more equations than unknowns. This means that has no solution (unless all data happen to fall on a straight line).

If exact solutions are impossible, we can still hope for an approximating solution. Perhaps we can find a vector p that best approximates b. More formally, we desire some such that the error

is minimized.

Since projection is a form of approximation, we can use a projection matrix to construct our linear prediction function .

A Worked Example

The solution is to make the error as small as possible. Since

can never leave the column space, choose the closest point to

in that subspace. This point is the projection

. Then the error vector

has minimal length.

To repeat, the best combination is the projection of b onto the column space. The error is perpendicular to that subspace. Therefore

is in the left nullspace:

We can use Guass-Jordan Elimination to compute the inversion:

A useful intermediate quantity is as follows:

We are now able to compute the parameters of our model, :

These parameters generate a predictive function with the following structure:

These values correspond with the line that best fits our original data!

Wrapping Up

Takeaways:

- In linear algebra, projection approximates a high-dimensional surface in a lower-dimensional space. The projection error can be measured.

- In linear regression, we usually cannot solve

, because there tends to be more data than parameters (

is not in the column space)

- We can find the closest vector in the column space by projecting onto

, and minimizing the projection error.

- Thus, the operation of projection can be used to perform parameter estimation, and produce a model that best approximates the training data.

Related Resources:

- This article is largely based on MIT lecture Projections onto Subspaces and Projection Matrices and Least Squares. If you’d like to test whether you understood this method, try the example problems for yourself!