Part Of: Neuroeconomics sequence

Followup To: Basal Ganglia as Action Selector, Intro to Behaviorism

Content Summary: 2600 words, 13 min read

Towards a theory of habit

Life brims with habitual behavior.

All our life is but a mass of habits—practical, emotional, and intellectual—systematically organized for our weal or woe, and bearing us irresistibly toward our destiny, whatever the latter may be.

Ninety-nine hundredths or, possibly, nine hundred and ninety-nine thousandths of our activity is purely automatic and habitual, from our rising in the morning to our lying down each night. Our dressing and undressing, our eating and drinking, our greetings and partings, our hat-raisings and giving way for ladies to precede, nay, even most of the forms of our common speech, are things of a type so fixed by repetition as almost to be classed as reflex actions.

William James

Why do we find ourselves on autopilot so frequently? What happens in our brain when we switch from reflexive to reflective thought? Is there a way to objectively tell which mode your brain is in, right now?

Our brains betray the programs they employ by the errors we express.

When you flip on a light switch, your behavior could be a result of the desire for illumination coupled with the belief that a certain movement will lead to it. Sometimes, however, you just turn on the light habitually without anticipating the consequences – the very context of having arrived home in a dark room automatically triggers your reaching for the light switch. While these two cases may appear similar, they differ in the extent to which they are controlled by outcome expectancy. When the light switch is known to be broken, the habit might still persist whereas the goal-directed action might not.

Yin & Knowlton (2006)

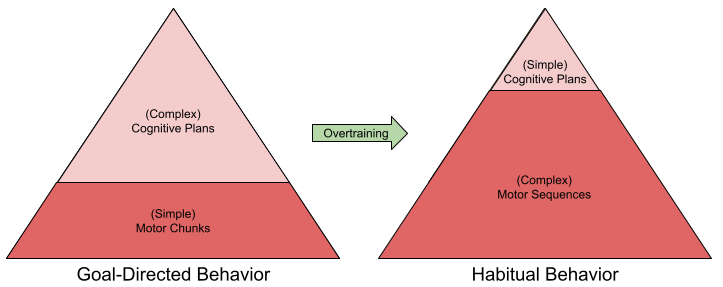

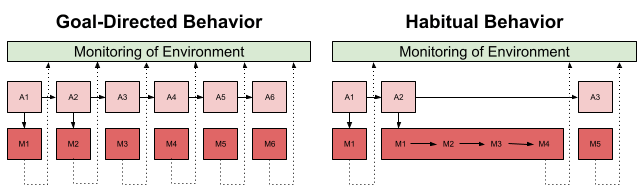

At a conceptual level, we can differentiate three cognitive phenomena: stimulus, response, and outcome. Habitual behavior uses the environment to guide its responses (an S-R map); goal-directed behavior directly optimizes the R-O relation. Goal-directed behavior appears immediately, from the start of training; habit emerges only with overtraining.

In both behavioral modes, reward is used for day-by-day learning. But only goal-directed behavior is sensitive to rapid changes in the anticipated outcome. We can operationalize this with two metrics (Balleine & Dezfouli 2019):

- Outcome expectancy: is behavior sensitive to changes in the environment?

- Reward devaluation: is it sensitive to changes in intrinsic value?

Habitual behavior is insensitive on both counts.

- Sometimes, we flip the light switch despite knowing the causal path from the light switch to the bulb is severed.

- Sometimes, we open the refrigerator despite being full.

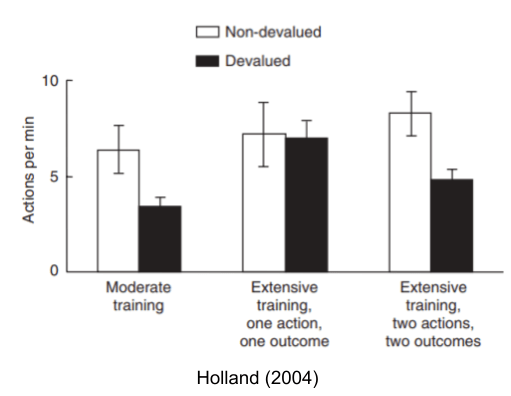

After being fed to satiety, a moderately trained rat will immediately reduce its reward-seeking behavior (e.g., press the lever fewer times). An extensively trained rat, however, will not respond to such devaluation events, a sign that it is acting out of habit. Interestingly, habit only occurs in predictable environments. In a more complicated task, habit (and its index, devaluation insensitivity) does not occur.

Adjudicated Competition Theory: Model-Based vs Model-Free

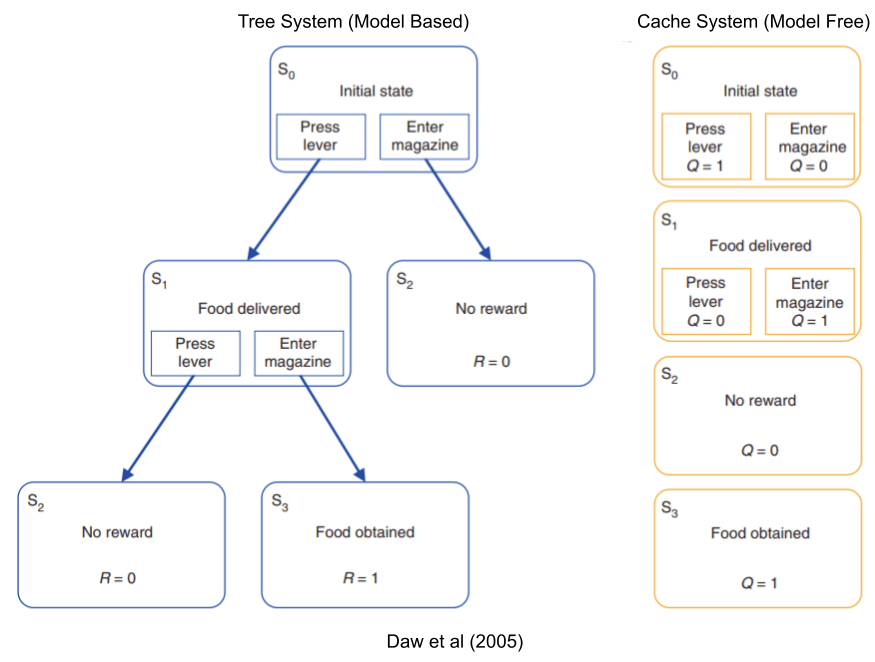

The basal ganglia is an action selector, giving exclusive motor access to the behavioral program with the strongest bid. We have already seen data connecting this structure with reinforcement learning (RL). But in the RL literature, there are two different ways to implement a learner: a tree system which builds an explicit world-model, and a cache system which ignores all that complexity, and just remembers stimulus-response pairings.

These two modeling approaches have different costs and benefits:

- Tree Systems are very costly to compute, but learn quickly & are more responsive to changes in the environment.

- Cache Systems are easier to maintain, but learn slowly & are less responsive.
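The contrast between the two learners can be made concrete with a toy example. The sketch below is only illustrative: the single-lever task, the function names, and all numbers are assumptions made for this example, not a model taken from the literature. The tree system recomputes value from its world-model at decision time, while the cache system stores a single number that only changes through new experience.

```python
# Toy task: one action ("press") that delivers one outcome ("food").
# Every name and number here is an illustrative assumption.

def tree_value(transition_prob, outcome_utility):
    """Model-based value: look ahead through the world-model at decision time."""
    return sum(p * outcome_utility[o] for o, p in transition_prob.items())

def cache_update(q, reward, learning_rate=0.1):
    """Model-free value: nudge a stored number toward the experienced reward."""
    return q + learning_rate * (reward - q)

transition_prob = {"food": 1.0}   # pressing reliably yields food
outcome_utility = {"food": 1.0}   # food is worth 1.0 to a hungry animal

# Training: the cache system slowly converges on the value of pressing.
q_press = 0.0
for _ in range(100):
    q_press = cache_update(q_press, reward=outcome_utility["food"])

# Devaluation: the animal is sated, so food is now worth nothing.
outcome_utility["food"] = 0.0

tree_after = tree_value(transition_prob, outcome_utility)
cache_after = q_press  # unchanged until the action is tried and re-experienced

print(f"tree value after devaluation:  {tree_after:.2f}")
print(f"cache value after devaluation: {cache_after:.2f}")
```

In this sketch the tree value drops to zero the moment the outcome is devalued, whereas the cached value stays near its trained level. That is the behavioral signature used above to tell goal-directed from habitual responding.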

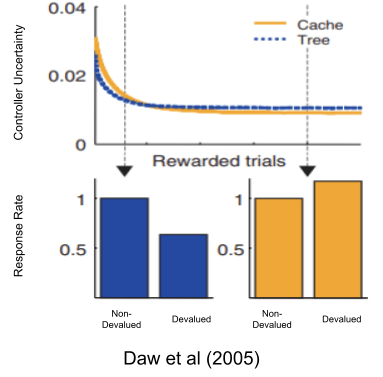

Besides driving behavior, both models also report their own uncertainty (i.e., error bars around the reward prediction). The adjudicated competition theory of habit (Daw et al 2005) suggests that the brain implements both models, and an adjudicator gives the reins to whichever model expresses the least uncertainty.

Because cache systems are more uncertain in novel environments (stemming from their low data efficiency), tree systems tend to predominate early. But as both systems learn, cache systems eventually become relatively more confident and take over behavioral control. This shift in relative uncertainty is thought to be the reason why our brains build habits if exposed to the same environment for a couple of weeks.
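A minimal sketch of uncertainty-based adjudication follows. It is a deliberate simplification and not the Bayesian model of Daw et al (2005): the variance formulas, the `search_noise` floor on the tree system, and every parameter value are assumptions chosen only to show how a crossover in relative uncertainty can arise.

```python
def cache_uncertainty(n_trials, prior_var=1.0, obs_var=1.0):
    """Variance of a slowly learned cached value after n noisy samples."""
    return 1.0 / (1.0 / prior_var + n_trials / obs_var)

def tree_uncertainty(n_trials, prior_var=1.0, obs_var=0.05, search_noise=0.05):
    """The tree system learns its model quickly (small obs_var), but each
    lookahead adds a fixed computational noise that training never removes."""
    return 1.0 / (1.0 / prior_var + n_trials / obs_var) + search_noise

def adjudicate(n_trials):
    """Hand control to whichever system reports the lower uncertainty."""
    if tree_uncertainty(n_trials) < cache_uncertainty(n_trials):
        return "tree"
    return "cache"

trials = range(1, 201)
controllers = [adjudicate(n) for n in trials]
switch_trial = trials[controllers.index("cache")]
print(f"tree system controls early trials; cache takes over at trial {switch_trial}")
```

Under these assumed parameters the tree system wins early because it is data-efficient, and the cache system wins later because its uncertainty keeps shrinking while the tree system's is bounded below by its search noise.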

Overtraining manufactures habits. But only sometimes! There are several quirks with our habit-generating machinery:

- Ratio schedules (which reward a behavior in proportion to how often it is performed) tend to preclude habit formation. Interval training (which only provides a reward every so often) is much more habitogenic.

- Even interval training only generates habits in relatively simple circumstances: for certain tasks involving two actions, behavior can remain goal-directed indefinitely.

Amazingly, the model of Daw et al (2005) reproduces not only the basic phenomena of overtraining, but these quirks as well!

Which two brain systems underlie goal-oriented and habitual behaviors, respectively? For that, we turn to the basal ganglia.

Three Loops: Sensorimotor, Associative, Limbic

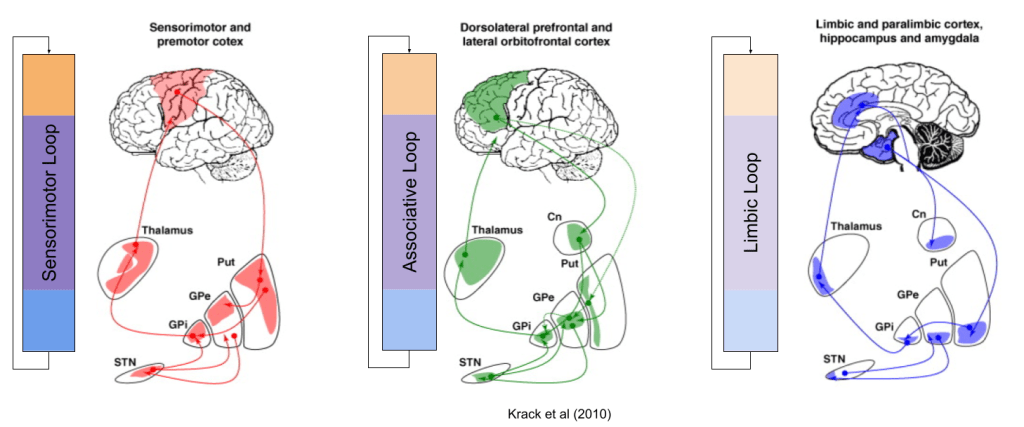

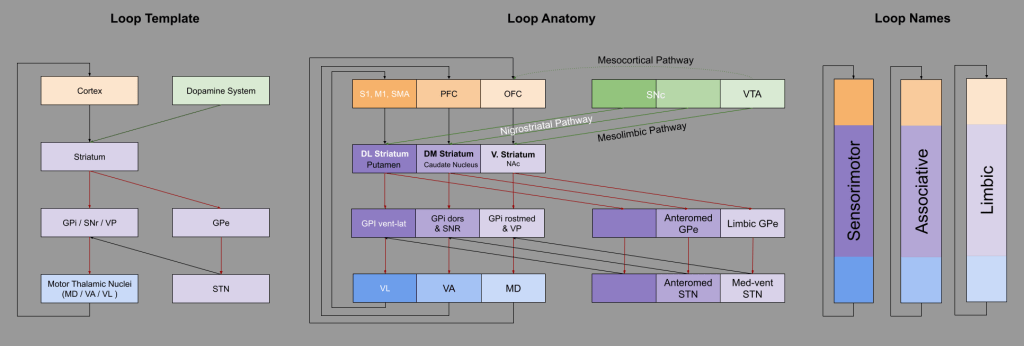

The striatum receives input from the entire cortex. As such, the fibers which comprise the basal ganglia are rather thick. As our tracing technologies matured, anatomists were able to inspect these tracts at higher resolutions. In the 1990s, it was discovered that this “bundle of fibers” actually comprised (at least) three parallel circuits.

These are called the Sensorimotor, Associative, and Limbic loops, based on their respective cortical afferents:

Note that there are important differences between the rodent and human striatum.

The mesolimbic and nigrostriatal dopaminergic pathways, discussed above, directly map onto the Limbic and Sensorimotor/Associative loops, respectively:

All three circuits (direct, indirect, and hyperdirect) exist in all three loops (Nougaret et al, 2013); however, I omitted hyperdirect from the above for simplicity.

Given its participation in the Limbic Loop, the mesolimbic pathway is also sometimes referred to as the reward pathway. Its component structures, the ventral tegmental area (VTA) and nucleus accumbens (NAc), are particularly important.

There have been attempts to refine these loops into more specific circuits. Using resting state methods, Choi et al (2012) found five intrinsic connectivity networks (ICNs) embedded within the striatum. Pauli et al (2016), using contrastive methods, found a different 5-network parcellation, which doesn’t overlap much with that of Choi et al. More recently, Greene et al (2020) localized individual-specific ICNs within the cortical-basal ganglia-thalamic circuit.

For now, we will mostly confine ourselves to a discussion of three loops.

Localizing The Controllers

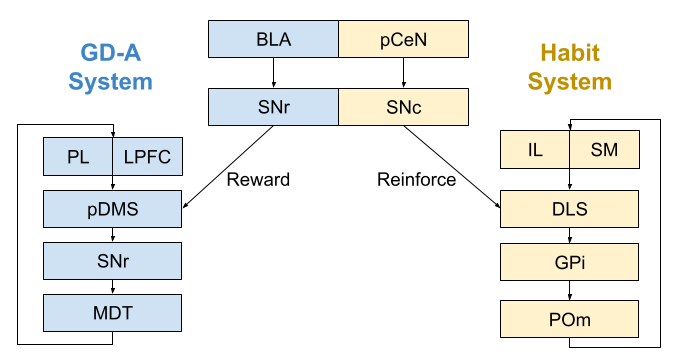

The associative loop appears to be the basis of the goal-directed action (GD-A) system. If you lesion any component of the system, behavior becomes exclusively habitual. For example, after lesions to the posterior dorsomedial striatum (pDMS), behavior becomes insensitive to changes in both reward contingency and reward value. The same effect occurs with lesions to the SNr and the mediodorsal thalamus (MD). Finally, lesions to the basolateral amygdala (BLA) also disrupt goal-directed behavior, plausibly by altering the reward signal provided by the substantia nigra (SNr).

The sensorimotor loop appears to be the basis of the habit system. If you lesion any component of the system, behavior becomes exclusively goal-directed. For example, after lesions to the dorsolateral striatum (DLS), behavior begins to track changes in both reward contingency and reward value. The same effect occurs with lesions to the GPi and the mediodorsal thalamus (MD). Finally, lesions to the posterior central nucleus of the amygdala (pCeN) also disrupt habitual behavior, plausibly by altering the reinforcement signal provided by the substantia nigra (SNc).

These conclusions are derived from both human and rodent behavioral studies (Balleine & O’Doherty 2010). In normal circumstances, these systems interoperate seamlessly. Damage to either system, however, causes exclusive reliance on the other.

The infralimbic cortex (IL) plays an important role in habitual behavior. Lesions to this site prevent the formation of habit (Killcross & Coutureau 2003), and even block expression of already formed habits (Smith et al 2012). The IL also appears critical for the formation & retention of both Pavlovian and instrumental extinction (Barker et al 2014). But habit-related activity seems to develop first in the dorsolateral striatum and only with overtraining in the IL (Smith & Graybiel 2013). In a similar manner, the prelimbic cortex (PL) appears to play an important role in goal-directed behavior.

Action Chunks and Sequence Learning

We have defined habits with respect to outcome contingency and value. But there is a third component: the sequence learning of motor skills.

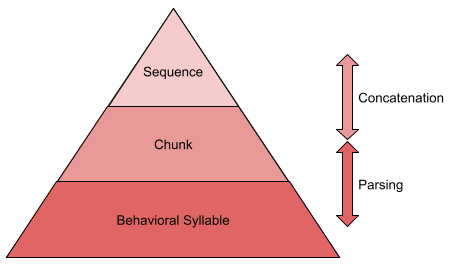

Behavior is not produced continuously. Rather, it is emitted in ~200ms atomic chunks, or behavioral syllables. Some 300 syllables have been discovered in mice (Wiltschko et al 2015).

Syllables are not emitted in random order. It often pays to use representations of multi-syllable action chunks. These chunks are, in turn, concatenated into larger sequences.

How do we know this? Chunks can be detected with response time measures: within-chunk actions occur more quickly than actions at chunk boundaries. Statistical methods also exist to detect sequence boundaries (Acuna et al 2014).
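The response-time logic can be sketched in a few lines. This is a naive thresholding heuristic, not the statistical method of Acuna et al (2014); the function names, the z-score threshold, and the response times are all made up for illustration.

```python
from statistics import mean, stdev

def find_chunk_boundaries(response_times_ms, z_threshold=1.0):
    """Indices of keypresses whose latency is unusually long.
    The first keypress always starts a chunk, so it is never reported."""
    mu = mean(response_times_ms)
    sigma = stdev(response_times_ms)
    return [i for i, rt in enumerate(response_times_ms)
            if i > 0 and (rt - mu) / sigma > z_threshold]

def split_into_chunks(keys, boundaries):
    """Cut the key sequence at each detected boundary."""
    chunks, start = [], 0
    for b in boundaries:
        chunks.append(keys[start:b])
        start = b
    chunks.append(keys[start:])
    return chunks

keys = list("ABCDEFGHI")
# Hypothetical latencies (ms): slow at chunk onsets, fast within chunks.
rts = [420, 180, 170, 410, 190, 175, 430, 185, 180]

boundaries = find_chunk_boundaries(rts)
chunks = split_into_chunks(keys, boundaries)
print(boundaries)
print(["".join(c) for c in chunks])
```

With this made-up data the slow keypresses fall on every third key, so the sequence is split into three chunks of three.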

Concatenation and execution response times also respond to dissociable events. Execution latencies are preferentially impacted by changing the location of the hand relative to the body; concatenation latencies preferentially respond to transcranial magnetic stimulation (TMS) of the pre-SMA area (Abrahamse et al 2013).

Action chunks tend to emerge organically every three or four keypresses. There is an interesting analogy here to memory chunks: for example, we remember phone numbers in chunks of three or four digits. The similarity between action and memory chunks may derive from a common neurological substrate.

Neural activity in the dorsolateral striatum (DLS, part of the sensorimotor loop) exhibits an interesting task bracketing pattern: firing peaks at the beginning and end of tasks. Martiros et al (2018) find that striatal projection neurons (SPNs) generate this bracketing pattern, and are complemented by fast-spiking striatal interneurons (FSIs) which fire continuously within the bracketing window.

This bracketing saves rewarding behaviors as a package for reuse. D2 antagonists don’t interfere with well-learned sequences, but do disrupt the formation of new chunks (Levesque et al 2007). Parkinson’s disease does too (Tremblay et al 2010).

Graybiel & Grafton (2015) argue that the dorsolateral striatum is specifically involved in developing skills: learning action sequences of particular value to the organism. This explains why both habitual and non-habitual skills are learned in the DLS. Indeed, innate fixed action patterns (e.g., grooming) are mediated here too (Aldridge et al 2004).

The supplementary motor area (SMA) plays a central role in implementing sequences. Rats organize their behavior with sequence learning, and lesions to the SMA disrupt these behaviors (Ostlund et al 2009). Similarly, magnetically interrupting the human SMA during a task blocks expression of the subsequent chunk (Kennerley et al 2004).

Within the SMA, the rostral pre-SMA seems to represent cognitive sequences; the caudal SMA-proper exercises motor sequences (Cona & Semenza 2016). Working memory tasks reliably activate pre-SMA, whereas language production reliably activates both pre-SMA and SMA-proper.

Hierarchy as Loop Integration

We have so far examined theories of loop competition. But consider the impact of dopamine shortages and surpluses in the various loops, per Krack et al (2010):

This data aligns with the organizational principle of hierarchy of the central nervous system. The limbic loop selects a desire, the associative loop explores its beliefs to identify a plan, and the sensorimotor loop translates those plans into motor commands. Here’s one possible interpretation, based on Guyenet (2018).

This hierarchical interpretation nicely complements results from sequence learning.

Hierarchical Collaboration Theory

We have seen computational and neurological evidence in favor of the adjudicated competition theory of habit. But the theory also has three important limitations. First, competitive models can explain devaluation behaviors, but struggle to replicate contingency responses. Second, the theory doesn’t explain sequence learning: why should habits coincide with the development of motor skill? Third, it doesn’t accord with hierarchy: why should habitual behaviors be concrete responses, rather than abstract actions?

This leads us to the hierarchical collaboration theory of habit. On Balleine & Dezfouli (2019)’s model, the associative system passes command serially to the sensorimotor system. Early in training, changes in the reward environment are noticed immediately. However, as the sensorimotor system learns increasingly complex action sequences, the associative system only notices changes to the reward environment at sequence boundaries. In other words, only after a sequence has finished executing will the associative system resume control. This would explain why sequence learning so strongly coincides with habit formation and reward insensitivity.
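The boundary-checking idea can be illustrated with a toy simulation. This is a sketch under strong assumptions, not the model of Balleine & Dezfouli (2019): the function name, the fixed sequence length, and the all-or-nothing devaluation are hypothetical simplifications.

```python
def responses_after_devaluation(sequence_length, devalue_at_step, total_steps=12):
    """Count responses emitted after the outcome has lost its value.

    The associative controller checks outcome value only when it selects
    a new sequence; the sensorimotor controller then runs that sequence
    to completion without checking.
    """
    def outcome_value(step):
        return 0.0 if step >= devalue_at_step else 1.0

    wasted = 0
    step = 0
    while step < total_steps:
        # Sequence boundary: the associative controller re-evaluates the outcome.
        if outcome_value(step) <= 0.0:
            break
        # Within the sequence: responses are emitted open-loop.
        for _ in range(sequence_length):
            if step >= devalue_at_step:
                wasted += 1
            step += 1
    return wasted

print(responses_after_devaluation(sequence_length=1, devalue_at_step=5))
print(responses_after_devaluation(sequence_length=4, devalue_at_step=5))
```

With single actions (length 1) every step is a boundary, so responding stops as soon as the outcome is devalued. With a four-action chunk, the devaluation lands mid-sequence and the remaining responses are emitted anyway, which looks like habitual insensitivity from the outside.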

In order to model this alternative account, one must first extend RL to accommodate chunks. A chunk replaces its component actions if the benefits of using that sequence exceed its costs. This formalism is provided by Dezfouli & Balleine (2012). Dezfouli & Balleine (2013) found that their hierarchical model replicated, and in some cases outperformed, the competition model of Daw et al (2011).
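To give a feel for the cost-benefit comparison, here is a back-of-the-envelope sketch. It is not the formalism of Dezfouli & Balleine (2012). It assumes, purely for illustration, that the benefit of a chunk is the reward earned in the deliberation time it saves, and that the cost is the expected loss from being unable to react to a change mid-sequence. All parameter names and values are hypothetical.

```python
def should_chunk(n_actions, deliberation_time_s, avg_reward_rate,
                 p_change_per_step, loss_if_unnoticed):
    """Return True if executing the actions as one chunk pays off.

    benefit: skipping deliberation at each within-chunk step frees up time,
             valued at the average reward rate.
    cost:    at each within-chunk step the environment may have changed,
             and an open-loop chunk cannot react to it.
    """
    within_chunk_steps = n_actions - 1
    benefit = within_chunk_steps * deliberation_time_s * avg_reward_rate
    cost = within_chunk_steps * p_change_per_step * loss_if_unnoticed
    return benefit > cost

# Stable environment: changes are rare, so chunking is worthwhile.
stable = should_chunk(n_actions=3, deliberation_time_s=0.5, avg_reward_rate=0.2,
                      p_change_per_step=0.01, loss_if_unnoticed=1.0)

# Volatile environment: changes are common, so actions stay separate.
volatile = should_chunk(n_actions=3, deliberation_time_s=0.5, avg_reward_rate=0.2,
                        p_change_per_step=0.3, loss_if_unnoticed=1.0)

print(stable, volatile)
```

Even this crude version captures the qualitative point made earlier: chunks, and hence habits, are favored in predictable environments and disfavored in volatile ones.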

The Balleine lab is not the only group to produce computational models of hierarchical collaboration. Baladron & Hamker (2020) produce an interesting model, which assigns the infralimbic (IL) cortex the role of loop shortcut between the associative/goal-directed and sensorimotor/habitual systems. Their model is also interesting in that it localizes the reward prediction error (RPE) to the limbic loop, while ascribing action prediction error (APE) and movement prediction error (MPE) to the associative and sensorimotor loops, respectively.

These are early days. I look forward to more granular models of habit, with more attention to the limbic circuit. As our mechanistic models of habit formation improve, so too does our therapeutic reach. If Graybiel & Grafton (2015) are right, and addictions are simply over-strong habits, such models may someday prove useful in clinical settings.

Until next time.

References

- Abrahamse et al (2013). Control of automated behavior: insights from the discrete sequence production task

- Acuna et al (2014). Multi-faceted aspects of chunking enable robust algorithms.

- Aldridge et al (2004). Basal ganglia neural mechanisms of natural movement sequences

- Baladron & Hamker (2020). Habit learning in hierarchical cortex-basal ganglia loops

- Balleine & Dezfouli (2019). Hierarchical action control: adaptive collaboration between actions and habits

- Balleine & Dickinson (1998). Goal-directed instrumental action: contingency and incentive learning and their cortical substrates.

- Balleine & O’Doherty (2010). Human and Rodent Homologies in Action Control: Corticostriatal Determinants of Goal-Directed and Habitual Action

- Barker et al (2014). A unifying model of the role of the infralimbic cortex in extinction and habits

- Choi et al (2012). The organization of the human striatum estimated by intrinsic functional connectivity

- Cona & Semenza (2016). Supplementary motor area as key structure for domain-general sequence processing: a unified account

- Daw et al (2011). Model-based influences on humans’ choices and striatal prediction errors

- Dezfouli & Balleine (2012). Habits, action sequences, and reinforcement learning

- Dezfouli & Balleine (2013). Actions, Action Sequences and Habits: Evidence That Goal-Directed and Habitual Action Control Are Hierarchically Organized

- Graybiel & Grafton (2015). The striatum: where skills and habits meet

- Greene et al (2020). Integrative and Network Specific Connectivity of the Basal Ganglia and Thalamus Defined in Individuals.

- Guyenet (2018). The Hungry Brain

- Holland (2004). Relations between Pavlovian-instrumental transfer and reinforcer devaluation.

- Kesby et al (2018). Dopamine, psychosis and schizophrenia: the widening gap between basic and clinical neuroscience

- Krack et al (2010). Deep brain stimulation: from neurology to psychiatry?

- Levesque et al (2007). Raclopride-induced motor consolidation impairment in primates: role of the dopamine type-2 receptor in movement chunking into integrated sequences.

- Martiros et al (2018). Inversely Active Striatal Projection Neurons and Interneurons Selectively Delimit Useful Behavioral Sequences

- Pauli et al (2016). Regional specialization within the human striatum for diverse psychological functions

- Killcross & Coutureau (2003). Coordination of actions and habits in the medial prefrontal cortex of rats.

- Smith et al (2012). Reversible online control of habitual behavior by optogenetic perturbation of medial prefrontal cortex

- Smith & Graybiel (2013). A dual operator view of habitual behavior reflecting cortical and striatal dynamics

- Tremblay et al (2010). Movement chunking during sequence learning is a dopamine-dependent process: a study conducted in Parkinson’s disease.

- Wiltschko et al (2015). Mapping Sub-Second Structure in Mouse Behavior

- Yin et al (2005). The role of the dorsomedial striatum in instrumental conditioning

I think I spot a mistake here:

“Because cache systems are more uncertain in novel environments (stemming from their low data efficiency), tree systems tend to predominate early. But as both systems learn, [b]tree[/b] systems eventually become more relatively confident and take over behavioral control.”

Happy to find your blog!

Nice catch! 🙂 Fixed.