Part Of: Algebra sequence

Followup To: Eigenvalues and Eigenvectors

Content Summary: 1300 words, 13 min read.

Limitations of Eigendecomposition

Last time, we learned how to locate eigenvalues and eigenvectors of a given matrix. Diagonalization is the process where a square matrix can be decomposed into two factors: a matrix (with eigenvectors along the columns) and a matrix

(with eigenvalues along the diagonal).

But as we saw with the spectral theorem, eigendecomposition only works well against square, symmetric matrices. If a matrix isn’t symmetric, it is easy to run into complex eigenvalues. And if a matrix isn’t square, you are out of luck entirely!

Can we generalize eigendecomposition to apply to a wider family of matrices? Can we diagonalize matrices of any size, even if they don’t have “nice” properties?

Yes. Self-transposition is the key insight of our “eigendecomposition 2.0”. We define the self-transpositions of as

and

.

Suppose . Then

and

. So these matrices are square. But they are also symmetric!

To illustrate, consider the following.

Since A is not symmetric, we have no guarantee that its eigenvalues are real. Indeed, its eigenvalues turn out to be complex:

Eigendecomposition on sucks. Are the self-transposed matrices any better?

These matrices are symmetric! Thus, they are better candidates for eigendecomposition.

Towards Singular Value Decomposition

Singular Value Decomposition (SVD) is based on the principle that all matrices are eigendecomposable after self-transposition. It is essentially a bug fix:

An important way to picture SVD is with the idea of orthogonal bases. It is relatively easy to find any number of orthogonal bases for a given rowspace. Call the matrix of orthogonal vectors .

We desire to find an orthogonal basis such that

produces an orthogonal basis in the column space. Orthogonal bases are not particularly hard to find. But most orthogonal bases, once projected to column space, will lose their orthogonality! We desire that particular orthogonal basis

such that

is also orthogonal.

We won’t require vectors in to be the same size as those in

. Instead, we will normalize

; its basis vectors will be orthonormal (orthogonal and normal). Then the length of each vector in

will diverge by a scaling factor.

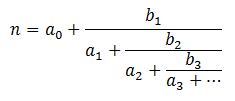

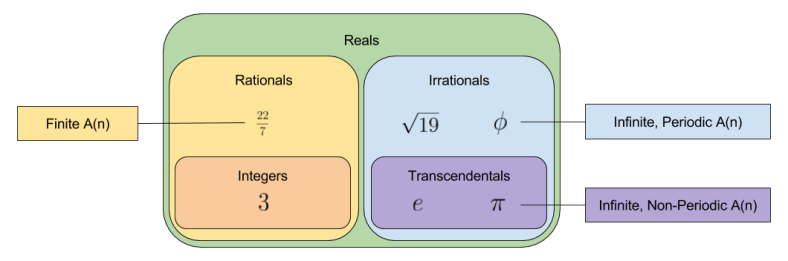

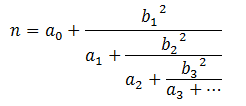

As we will soon see, these scaling factors are not eigenvalues. Instead, we will use sigmas instead of lambdas.

- Scaling factors

, analogous to eigenvalues

.

- Diagonal matrix

, analogous to diagonal matrix

Our full picture then, looks like this:

Let us now translate this image into matrix language.

But we can easily factorize the right-hand side:

So we have that:

Since both and

are orthogonal, inversion of either is equivalent to transposition:

This strongly resembles our diagonalization equation . SVD distinguishes itself by considering two orthogonal eigenmatrices

and

, not just one (

).

Recalling that ,

But now the innermost term cancels. Since is a square diagonal matrix, its self-transposition is simply equal to

. So,

Since is a square, symmetric matrix, our diagonalization theorem applies!

To find , a similar trick works:

The relationships between SVD and eigendecomposition are as follows:

is the eigenvectors of

is the eigenvectors of

is the square root of the eigenvalues matrix

If any eigenvalue is negative, the corresponding sigma factor would be complex. But and

are positive-semidefinite, which guarantees non-negative eigenvalues. This assures us that

contains only real values.

In contrast to eigendecomposition, every matrix has an SVD decomposition. Geometrically, and

act as rotational transformations, and Sigma acts as a scaling transformation. In other words, every linear transformation comprises a rotation, then scaling, then another rotation.

A Worked Example

Let’s revisit . Recall that:

Eigendecomposition against is unpleasant because

is not symmetric. But

is guaranteed to be positive semi-definite; that is, to have non-negative eigenvalues. Let’s see this in action.

These are positive, real eigenvalues. Perfect! Let’s now derive the corresponding (normalized) eigenvectors.

SVD intends to decompose into

. The above findings give us two of these ingredients.

What’s missing? ! To find it, we perform eigendecomposition on

. This is an especially easy task, because

is already a diagonal matrix.

We have arrived at our first Singular Value Decomposition.

Okay, so let’s check our work. 😛

These matrices are a viable factorization: multiplication successfully recovers .

Takeaways

- Eigendecomposition only works for a subclass of matrices; SVD decomposes all matrices.

- SVD relies on self-transposition to convert any arbitrary matrix into one that works well against eigendecomposition (guarantees square

and symmetric

).

- Another way to interpret SVD is by taking a special kind of orthogonal basis that, once passed through the linear transformation, preserves its orthogonality.

- Every matrix

. That is, every linear transformation can be conceived as rotation + scaling + rotation.

Until next time.